For many, Salesforce is still synonymous with CRM. It was once simply a place to access customer data. Used primarily by sales and service reps and their managers, it gave users a unified view of customers and their interactions with the company. This, in turn, cemented Salesforce’s role as the platform where users interact with data and run automations based on that same data.

However, Data Cloud and Agentforce are steering the platform in a new direction. Having data that’s up-to-date and accurate is no longer enough: data must be actionable. In other words, it must be unified, secure, accessible, and relevant for users, automations, and agents. This stretches the meaning of “Single Source of Truth” (SSoT), as the capabilities that make data usable become more important than the data itself.

This piece explores why Salesforce’s role as the source of truth is changing, and how admins and architects can turn that shift into an advantage.

Beyond CRM: How Salesforce Became an Activation Layer

Salesforce stopped being “just a CRM” the moment agentic AI entered the picture. With Agentforce, we’re no longer talking about harmless AI add-ons such as Einstein Lead Scoring or Opportunity Scoring. Agentic AI carries strong potential for business impact, for better and for worse. Unlike AI assistants or copilots, agents don’t sit idle waiting for human commands. They operate autonomously based on their instructions and the data available to them.

This presents a huge shift in responsibility. If your data is stale, fragmented, or ungoverned, these agents will happily automate the wrong thing at scale. In other words, the actionability of your data now determines the quality of your AI. The days of “good enough” data hygiene are over. Every gap, every delay, every blind spot becomes an automation risk. Your architecture isn’t just supporting business processes anymore – it’s shaping autonomous decisions in real time.

Salesforce used to require replicating data from external systems via APIs to power its automations. This too has changed. With bring-your-own-lake (BYOL) and file federation (zero-copy), Data Cloud can activate data sitting in your data lake without duplicating it. That means you can keep governance where it belongs, avoid compounding storage costs, and still deliver real-time personalization or AI-driven actions. You no longer need to centralize and consolidate everything. Activation beats aggregation.

Finally, Salesforce isn’t just about structured CRM data anymore. Data graphs let you unify diverse datasets, while vector search turns unstructured data sources into first-class citizens. Why does that matter? Because AI-powered workflows don’t just need rows and columns – they need context. And context lives in conversations, documents, and knowledge bases. This is where Salesforce is headed as an activation layer.

What Powers the Salesforce Activation Layer

Speed matters with agentic AI, even more than before. Contrary to what you may think, agents are slow thinkers by nature, and they are built that way on purpose. Agents engage in System 2 thinking: deliberate, multi-step reasoning that enables complex problem solving and self-reflection, but also slows down every response. Data activation therefore has to be fast, so that data access doesn’t make agents even slower. Under the hood, the Salesforce Platform and Data Cloud bring together key capabilities to make data usable the instant it matters.

Prepare Your Data for AI in Advance

If you’ve worked with SQL queries, you know that joins take time. A join combines two or more datasets using a shared key: a column whose values appear in both datasets, such as a contact ID. This requires processing, and processing causes latency. If the query runs at the moment of action, extensive joins lead to slowdowns or timeouts.
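To make that cost concrete, here is a minimal Python sketch of a hash join, one common way query engines execute a join. The tables and columns (`contacts`, `orders`, `contact_id`) are hypothetical sample data, not Salesforce objects. Both passes over the data are repeated every time the join runs.

```python
from collections import defaultdict

# Hypothetical sample data: two "tables" sharing the contact_id key.
contacts = [
    {"contact_id": "C1", "name": "Ada"},
    {"contact_id": "C2", "name": "Grace"},
]
orders = [
    {"order_id": "O1", "contact_id": "C1", "amount": 120.0},
    {"order_id": "O2", "contact_id": "C1", "amount": 80.0},
    {"order_id": "O3", "contact_id": "C2", "amount": 50.0},
]


def hash_join(left, right, key):
    """Inner-join two lists of dicts on `key`."""
    # Build phase: index every row of the right table by its key value.
    index = defaultdict(list)
    for row in right:
        index[row[key]].append(row)
    # Probe phase: look up each left row's key in the index.
    joined = []
    for row in left:
        for match in index.get(row[key], []):
            joined.append({**row, **match})
    return joined


rows = hash_join(contacts, orders, "contact_id")
for r in rows:
    print(r["name"], r["order_id"], r["amount"])
# Ada O1 120.0
# Ada O2 80.0
# Grace O3 50.0
```

In a real engine, the build-and-probe work grows with data volume, and that is exactly the latency that precomputing relationships avoids.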

To counteract this, Data Cloud lets you prepare datasets ahead of time using Data Graphs. A Data Graph is a prebuilt, query-friendly view that combines and structures multiple related datasets, so insights and activations can run in near real time. Instead of stitching data together at runtime, you build the relationships once and let Data Cloud serve up the graph whenever it’s needed.
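The “build once, serve many times” idea can be sketched in plain Python. This is not the Data Graph API or its output format; `build_graph` and `lookup` are hypothetical names, and the nested dictionary simply stands in for a precomputed, per-customer view.

```python
from collections import defaultdict

# Hypothetical sample data from three related sources.
contacts = [{"contact_id": "C1", "name": "Ada"}, {"contact_id": "C2", "name": "Grace"}]
orders = [
    {"order_id": "O1", "contact_id": "C1", "amount": 120.0},
    {"order_id": "O2", "contact_id": "C1", "amount": 80.0},
]
cases = [{"case_id": "K1", "contact_id": "C2", "status": "Open"}]


def build_graph(contacts, orders, cases):
    """Precompute one nested document per contact, ahead of time."""
    orders_by = defaultdict(list)
    for o in orders:
        orders_by[o["contact_id"]].append(o)
    cases_by = defaultdict(list)
    for c in cases:
        cases_by[c["contact_id"]].append(c)
    return {
        c["contact_id"]: {
            **c,
            "orders": orders_by[c["contact_id"]],
            "cases": cases_by[c["contact_id"]],
            "total_spend": sum(o["amount"] for o in orders_by[c["contact_id"]]),
        }
        for c in contacts
    }


graph = build_graph(contacts, orders, cases)  # batch step, off the hot path


def lookup(contact_id):
    """At the moment of action: a single key lookup, no joins."""
    return graph[contact_id]


print(lookup("C1")["total_spend"])  # 200.0
print(lookup("C2")["cases"][0]["status"])  # Open
```

The trade-off is freshness: a prebuilt view is only as current as its last refresh, which is why real-time data graphs matter for time-sensitive use cases.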

Ingest Events in Real Time

Ten years ago, “real time” was measured in minutes; today, anything with more than a few milliseconds of latency is considered merely “near real time”. Real-time ingestion (streaming) keeps your activation layer current. Instead of waiting for batch jobs to catch up, streaming updates flow straight into identity resolution, insights, and segments as they happen. That means the moment a customer clicks, buys, or calls, Data Cloud can trigger a flow, an Apex class, or a headless agent. The downside is that real-time data operations consume significantly more credits than batch processing.
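The following sketch contrasts the two ingestion modes. It assumes a hypothetical purchase-event shape and a hypothetical “high value” segment rule (total spend of at least 500); the `triggered` list stands in for launching a flow, an Apex class, or an agent. The streaming store reacts to the event that crosses the threshold, while a batch job that ran before that event has not seen it yet.

```python
from dataclasses import dataclass, field


@dataclass
class StreamingProfileStore:
    """Updates spend and segment membership on every incoming event."""
    high_value_threshold: float = 500.0
    spend: dict = field(default_factory=dict)
    segment: set = field(default_factory=set)
    triggered: list = field(default_factory=list)

    def on_event(self, event):
        cid = event["customer_id"]
        self.spend[cid] = self.spend.get(cid, 0.0) + event["amount"]
        if self.spend[cid] >= self.high_value_threshold and cid not in self.segment:
            self.segment.add(cid)
            self.triggered.append(cid)  # stand-in for triggering downstream automation


def batch_recompute(events, threshold=500.0):
    """Recompute the segment from scratch; only sees events loaded so far."""
    totals = {}
    for e in events:
        totals[e["customer_id"]] = totals.get(e["customer_id"], 0.0) + e["amount"]
    return {cid for cid, total in totals.items() if total >= threshold}


events = [
    {"customer_id": "C1", "amount": 300.0},
    {"customer_id": "C2", "amount": 100.0},
    {"customer_id": "C1", "amount": 250.0},
]
store = StreamingProfileStore()
for e in events:
    store.on_event(e)

print(store.triggered)  # ['C1']
print(batch_recompute(events[:2]))  # set()  (batch ran before the third event)
print(batch_recompute(events))  # {'C1'}  (next batch run catches up)
```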

Activate But Don’t Replicate

With zero-copy federation, Data Cloud can query data directly in Snowflake, AWS, or other data lakes without pulling it into Salesforce. That means fewer redundant copies, lower storage costs, and fewer synchronization issues. Salesforce uses the Apache Iceberg open table format to read data where it lives and make it actionable in real time. Additionally, Data Cloud can write updates back to the source. The result: a unified activation layer without the cost and risks of replication.

Embed Meaning into Your Datasets

Until now, most company data has been locked away in unstructured formats: emails, memos, manuals, and presentations. Data Cloud makes this data actionable. With Vector Search, Data Cloud can split documents, emails, and transcripts into chunks and embed them as vectors, so they’re searchable by meaning, not just keywords. That means your Agentforce agents and Tableau dashboards can pull context from PDFs or call notes as easily as they query a contact record.
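As an illustration of chunking and meaning-based search, here is a toy Python sketch. Real systems call an embedding model; here a small hand-written concept lexicon (`CONCEPTS`, a hypothetical stand-in) maps words with related meanings to the same vector dimension, so a query like “money back” can match a passage about refunds without sharing a single keyword with it. Chunk size and overlap are arbitrary assumptions.

```python
import math
import re

# Hypothetical concept lexicon standing in for an embedding model: words with
# related meanings share a dimension (0 = refunds, 1 = shipping, 2 = login).
CONCEPTS = {
    "refund": 0, "refunds": 0, "reimbursed": 0, "money": 0,
    "shipping": 1, "delivery": 1, "courier": 1, "parcels": 1,
    "password": 2, "login": 2, "credentials": 2, "sign": 2,
}
DIMS = 3


def chunk(text, size=8, overlap=2):
    """Split text into overlapping windows of `size` words."""
    words = text.split()
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def embed(text):
    """Map text to a unit-length concept vector (all zeros if nothing matches)."""
    vec = [0.0] * DIMS
    for word in re.findall(r"[a-z]+", text.lower()):
        if word in CONCEPTS:
            vec[CONCEPTS[word]] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def cosine(a, b):
    # Vectors are already normalized, so the dot product is the cosine similarity.
    return sum(x * y for x, y in zip(a, b))


docs = [
    "Customers can request a refund within 30 days and are reimbursed to the original card.",
    "Parcels ship by courier and delivery usually takes three to five business days.",
    "Reset your password from the login page if your credentials stop working.",
]
# Index: one (doc_id, chunk_text, vector) entry per chunk.
index = [(doc_id, c, embed(c)) for doc_id, d in enumerate(docs) for c in chunk(d)]


def search(query, k=1):
    q = embed(query)
    return sorted(index, key=lambda item: cosine(q, item[2]), reverse=True)[:k]


print(search("When do I get my money back?")[0][0])  # 0
print(search("Is delivery free?")[0][0])  # 1
```

A keyword search for “money back” would miss the refund policy entirely; the vector comparison finds it because both land on the same dimension.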

Retrieve Data at Inference Time

With Prompt Builder, you can inject CRM and Data Cloud data into AI prompts using retrievers and RAG (Retrieval-Augmented Generation). Retrievers act as no-code bridges between your search indexes and prompt templates, pulling the right data in real time when an agent generates a response. The resulting augmented prompt passes through the Einstein Trust Layer, which is designed to keep sensitive data from being exposed or used for model training.
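A minimal sketch of the retrieve-then-inject pattern behind RAG, assuming a hypothetical template and knowledge list. Prompt Builder has its own merge-field syntax and retriever configuration, which this does not reproduce. For brevity, the retriever here ranks passages by simple word overlap, where a real retriever would query a vector or hybrid search index.

```python
from string import Template

# Hypothetical prompt template (not Prompt Builder syntax).
TEMPLATE = Template(
    "You are a support agent.\n"
    "Customer: $name (tier: $tier)\n"
    "Relevant knowledge:\n$context\n"
    "Question: $question\n"
    "Answer using only the knowledge above."
)

# Hypothetical knowledge passages standing in for a search index.
KNOWLEDGE = [
    "Gold tier customers get free expedited shipping.",
    "Refunds are issued within 30 days of purchase.",
    "Passwords can be reset from the login page.",
]


def tokens(text):
    return set(text.lower().replace(".", "").replace("?", "").split())


def retrieve(question, passages, k=2):
    """Toy retriever: rank by word overlap, drop passages with no overlap."""
    q = tokens(question)
    ranked = sorted(passages, key=lambda p: len(q & tokens(p)), reverse=True)
    return [p for p in ranked[:k] if q & tokens(p)]


def build_prompt(record, question):
    """Ground the template with CRM fields plus retrieved passages."""
    context = "\n".join(f"- {p}" for p in retrieve(question, KNOWLEDGE))
    return TEMPLATE.substitute(
        name=record["Name"],
        tier=record["Tier"],
        context=context or "- (no matching knowledge)",
        question=question,
    )


prompt = build_prompt({"Name": "Ada", "Tier": "Gold"}, "Do Gold customers get free shipping?")
print(prompt)
```

Only the shipping passage reaches the prompt; the refund and password passages are filtered out because they share no words with the question.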

Stop Fighting Your Data – and Start Activating It

A recent Salesforce Ben post revealed that most organizations’ CRM data isn’t ready for AI agents, citing incomplete data (38%), inconsistent formats (37%), and outdated records (37%). Numbers like these can feel paralyzing, but they underscore a hard truth: poor data governance is a major risk. You can adapt, ignore it at your peril, or freeze in alarm. Whichever you choose, you can’t escape the consequences of bad data hygiene.

If you want to break the stalemate, take a pragmatic approach: one that acknowledges the risks of poor data hygiene while leveraging Salesforce’s latest data and AI capabilities. Below are three reference patterns for Salesforce architecture that tap into the powerful activation features released between 2023 and 2025.

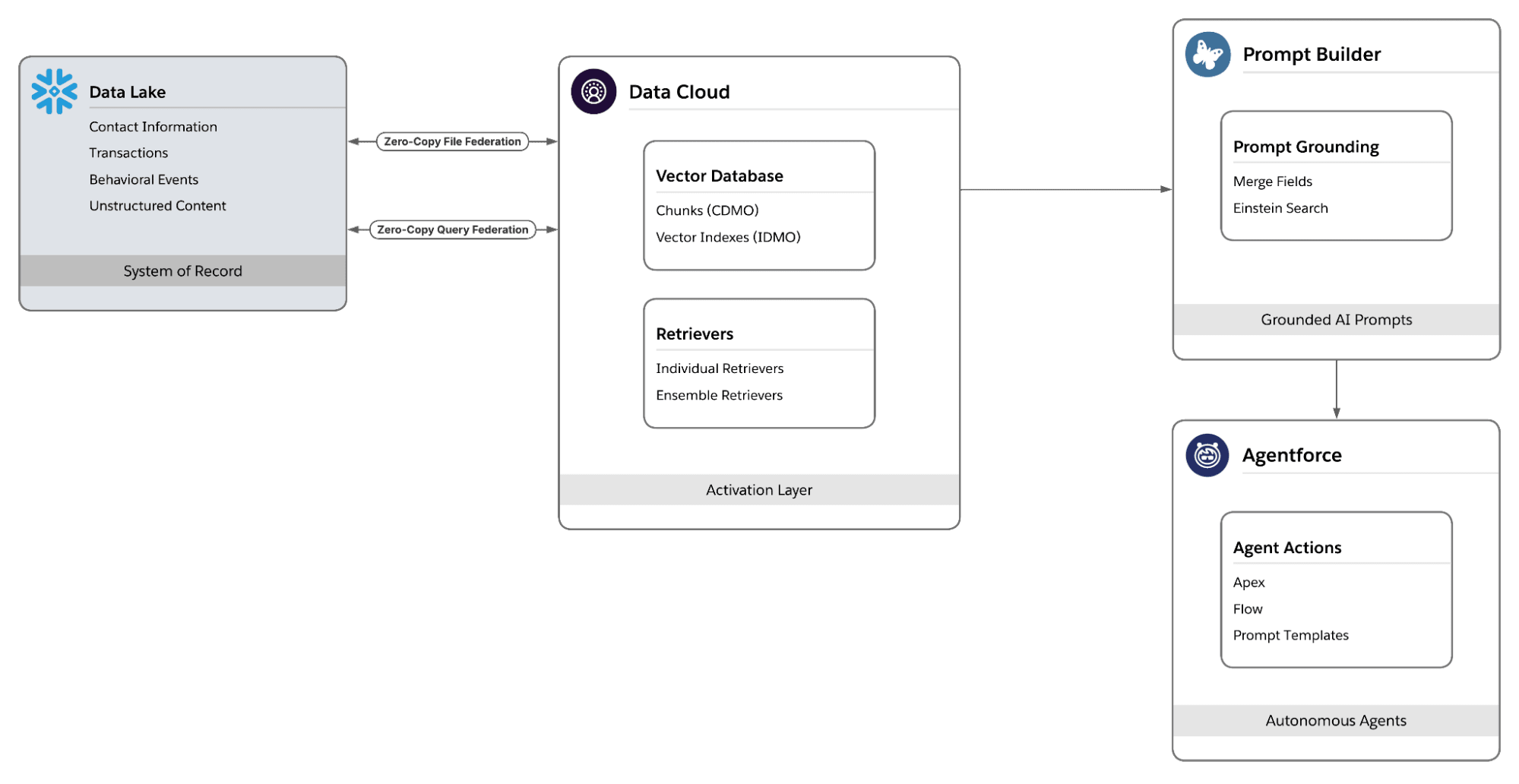

Grounded Agents on Federated Data

In the diagram above, Snowflake is your system of record. You don’t copy data into Salesforce; you activate it in place. Use Zero-Copy File Federation for structured data to maintain scalability and performance at high data volumes. This lets Data Cloud read Snowflake tables directly and expose the fields you need for agentic reasoning and automations. For more complex needs and unstructured data, Query Federation is the way to go.

Build vector indexes in Data Cloud so agents can match user intent to relevant content in your knowledge base. Prompt Builder grounds Agentforce with this data, injecting the right CRM and Data Cloud context and real-time search results into each prompt. Finally, trigger outcomes with Agentforce actions that update records, create tasks, or add campaign members, so insights and agent decisions translate into immediate action.

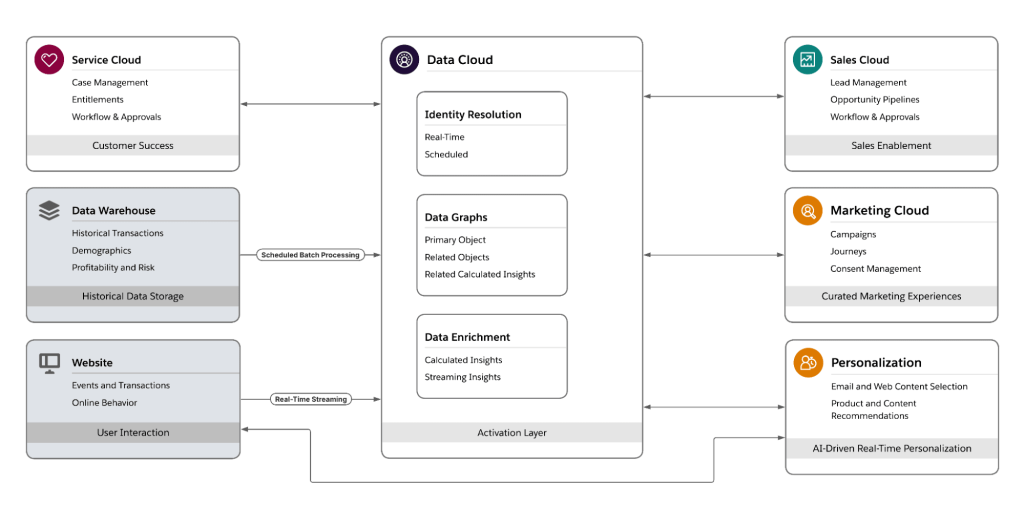

Real-Time Customer 360 for RevOps Journeys

When identities span Service Cloud and third-party sources (e.g., your website and data warehouse), start by unifying individuals with identity resolution that runs both in real time and on a schedule for bulk processing. Feed that output into real-time data graphs, so you have a materialized view of each person at the moment of activation. Compute real-time insights (e.g., customer lifetime value, churn risk, purchase intent) that update as new data arrives.
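In Data Cloud, identity resolution is configured with match rules rather than written as code, but the core matching idea can be sketched in Python. This example assumes two hypothetical match rules (exact match on normalized email, exact match on normalized phone digits) and uses a union-find structure so matches chain transitively: the warehouse record joins Ada’s profile through the shared phone number even though it has no email.

```python
import re

# Hypothetical source records from three systems.
records = [
    {"id": "svc-1", "email": "Ada@Example.com", "phone": "+1 (555) 010-0001"},
    {"id": "web-7", "email": "ada@example.com", "phone": None},
    {"id": "dwh-3", "email": None, "phone": "15550100001"},
    {"id": "web-9", "email": "grace@example.com", "phone": None},
]


def normalize_email(email):
    return email.strip().lower() if email else None


def normalize_phone(phone):
    digits = re.sub(r"\D", "", phone) if phone else ""
    return digits or None


def resolve(records):
    """Group record IDs into unified profiles; returns sorted lists of IDs."""
    parent = {r["id"]: r["id"] for r in records}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a, b):
        parent[find(a)] = find(b)

    # Match rules: any shared normalized email OR phone links two records.
    first_seen = {}
    for r in records:
        keys = [("email", normalize_email(r["email"])), ("phone", normalize_phone(r["phone"]))]
        for key in keys:
            if key[1] is None:
                continue
            if key in first_seen:
                union(r["id"], first_seen[key])
            else:
                first_seen[key] = r["id"]

    groups = {}
    for r in records:
        groups.setdefault(find(r["id"]), []).append(r["id"])
    return sorted(sorted(g) for g in groups.values())


print(resolve(records))
# [['dwh-3', 'svc-1', 'web-7'], ['web-9']]
```

Transitive matching is powerful but also the main risk: one overly loose rule (say, a shared household phone number) can merge distinct people, so match rules deserve careful testing.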

Then let those insights fire Data Cloud-triggered flows that drive action across your stack: create or update opportunities in Sales Cloud, and notify account owners of major service incidents. In Marketing Cloud, use Data Cloud data as triggers for automated journeys and as segment criteria for campaigns. Finally, Data Cloud can feed real-time experiences in Personalization, turning fresh signals into product or content recommendations.
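The sketch below models an insight-triggered action in Python, assuming a hypothetical purchase-intent score between 0 and 1 and a hypothetical threshold of 0.7. It fires only when the score crosses the threshold upward (edge-triggered), so a profile that stays above it doesn’t generate duplicate opportunities. The two action functions are placeholders for flow steps, not Salesforce APIs.

```python
from typing import Callable, Dict, List

Action = Callable[[str, float], str]


def create_or_update_opportunity(profile_id: str, score: float) -> str:
    # Placeholder for a Data Cloud-triggered flow that touches Sales Cloud.
    return f"opportunity:{profile_id}"


def notify_account_owner(profile_id: str, score: float) -> str:
    # Placeholder for a notification step in the same flow.
    return f"notify-owner:{profile_id}"


class InsightTrigger:
    """Run actions when an insight crosses its threshold (edge-triggered)."""

    def __init__(self, threshold: float, actions: List[Action]):
        self.threshold = threshold
        self.actions = actions
        self.above: Dict[str, bool] = {}  # last known state per profile
        self.log: List[str] = []

    def update(self, profile_id: str, score: float) -> None:
        was_above = self.above.get(profile_id, False)
        is_above = score >= self.threshold
        self.above[profile_id] = is_above
        if is_above and not was_above:  # fire only on the upward crossing
            for action in self.actions:
                self.log.append(action(profile_id, score))


intent = InsightTrigger(0.7, [create_or_update_opportunity, notify_account_owner])
for score in (0.4, 0.75, 0.8, 0.5, 0.9):  # successive scores for one profile
    intent.update("P1", score)
print(intent.log)
# ['opportunity:P1', 'notify-owner:P1', 'opportunity:P1', 'notify-owner:P1']
```

The 0.8 reading fires nothing because the profile was already above the threshold; the later 0.9 fires again only because the score dipped to 0.5 in between.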

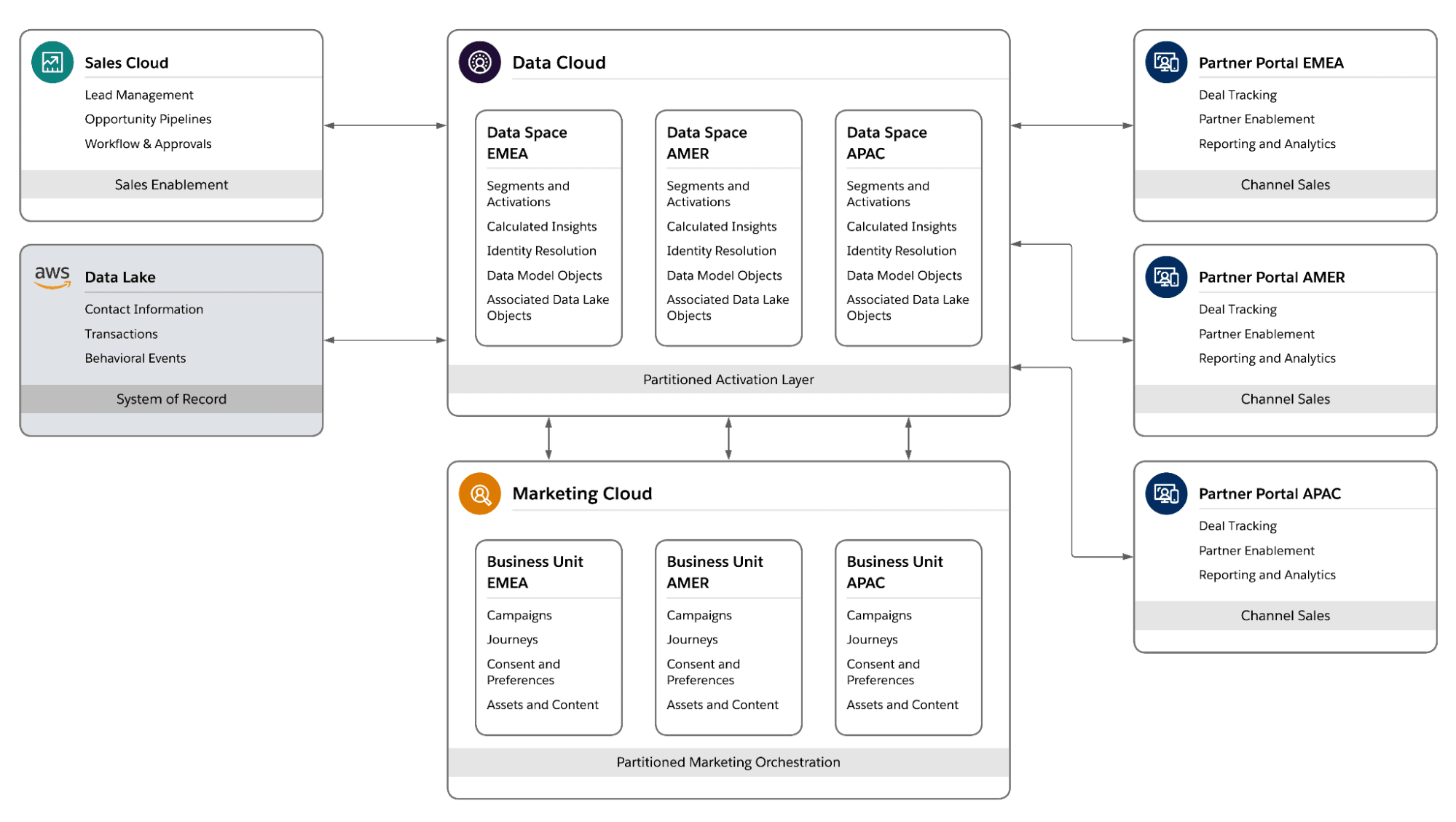

Multi-Brand, One Org

Data Cloud lets you partition your data model to comply with regional regulations or company policy. Use Data Spaces to isolate brands, regions, or subsidiaries. Each Data Space is self-contained, with its own data, permissions, and configurations. This keeps sensitive information isolated while the org still benefits from a shared integration architecture: although Data Spaces isolate data and metadata, they may share Data Bundles and connectors.
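Data Spaces are configured declaratively, so the following is only a conceptual Python model of the filter-then-isolate idea: each hypothetical `DataSpace` receives the records that match its filter, and reads are refused for users who are not members of that space. Region codes and user names are made up.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set


@dataclass
class DataSpace:
    name: str
    include: Callable[[dict], bool]  # filter deciding which records land here
    members: Set[str]  # users allowed to read this space
    records: List[dict] = field(default_factory=list)


def partition(records, spaces):
    """Route each record into every space whose filter accepts it."""
    for record in records:
        for space in spaces:
            if space.include(record):
                space.records.append(record)


def read(space, user):
    """Return a space's records, refusing users who are not members."""
    if user not in space.members:
        raise PermissionError(f"{user} cannot read {space.name}")
    return space.records


# Hypothetical global feed (e.g., from a Sales Cloud org and a data lake).
records = [
    {"id": 1, "region": "EU"}, {"id": 2, "region": "US"},
    {"id": 3, "region": "APAC"}, {"id": 4, "region": "EU"},
]
spaces = [
    DataSpace("EU", lambda r: r["region"] == "EU", {"eu_marketer"}),
    DataSpace("US", lambda r: r["region"] == "US", {"us_marketer"}),
    DataSpace("APAC", lambda r: r["region"] == "APAC", {"apac_marketer"}),
]
partition(records, spaces)

print([r["id"] for r in read(spaces[0], "eu_marketer")])  # [1, 4]
try:
    read(spaces[0], "us_marketer")
except PermissionError as err:
    print(err)  # us_marketer cannot read EU
```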

In the reference architecture above, a global Sales Cloud org and an AWS data lake feed data into Data Cloud. This data is then filtered and partitioned into three regional Data Spaces, which in turn link to their corresponding Marketing Cloud Business Units and Experience Cloud portals. The result is a clean division of data that gives each region the autonomy to adapt its data model and configurations as needed.

Beware These Source of Truth Anti-Patterns

Stop dumping everything into Salesforce. Forcing all data into the platform in the name of “SSoT” is an outdated reflex. With Zero-Copy Federation, you can activate data where it lives and ingest only what’s truly needed, when it’s needed. The same principle applies to unstructured content: don’t blindly ingest and vectorize sensitive documents without masking and access controls. Since Salesforce record sharing and field-level security do not apply to Data Cloud objects, unstructured data can provide backdoor access to privileged data.
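Masking before vectorization can start as simply as the Python sketch below, which replaces emails, card-like digit runs, and phone numbers with placeholder tokens before text is chunked for embedding. The patterns are deliberately minimal assumptions and miss many kinds of sensitive data (names, addresses, account numbers); treat this as an illustration of where masking sits in the pipeline, not as a compliance control.

```python
import re

# Deliberately minimal, hypothetical patterns. Order matters: emails first
# (they can contain digits), then card-like numbers, then phone numbers.
PATTERNS = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    ("CARD", re.compile(r"\b\d(?:[ -]?\d){12,15}\b")),  # 13 to 16 digits
    ("PHONE", re.compile(r"\+?\d[\d ()-]{7,}\d")),
]


def mask(text: str) -> str:
    """Replace matches of each pattern with a [LABEL] placeholder."""
    for label, pattern in PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text


def chunks_for_indexing(document: str, size: int = 50) -> list:
    """Mask first, then chunk: only masked text ever reaches the embedding step."""
    words = mask(document).split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


note = "Contact ada@example.com or +1 (555) 010-0001. Card 4111 1111 1111 1111 on file."
print(mask(note))
# Contact [EMAIL] or [PHONE]. Card [CARD] on file.
```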

Forget “just in case” ingestion. Every pipeline carries cost and risk, whether you replicate or federate. If there’s no clear activation use case, don’t bring it in. Also, real-time ingestion may sound impressive, but it’s expensive and harder to govern. Use it where milliseconds matter – for everything else, batch is smarter and safer. And beware orchestration debt – point solutions and ad-hoc flows pile up fast, creating a tangle no one can manage. Design for modularity and reuse from day one, or your activation layer will collapse under its own weight.

Final Thoughts

The idea of a CRM as merely a data interaction platform has long since run its course. Platforms focused only on delivering a 360° view have either lost relevance or never achieved it – as was the fate of Zendesk in late 2025. Salesforce could have faced the same outcome without a drastic course correction. By making Data Cloud central to its strategy, Salesforce has redefined its role as a source of truth. It is no longer content with storing and surfacing customer insights.

Salesforce aims to be the hub that orchestrates agentic workflows and data activation across every business domain. It’s an ambitious vision, and Salesforce has the potential – but the real question is whether customers and partners can master the Platform and Data Cloud to make it real.