Salesforce is looking to double down on its “agentic enterprise” vision, where agents are fully capable of working alongside a human workforce.

In its latest research update, the company revealed three key advancements: an agent testing environment, brand new benchmarks, and a data cleanser, all of which bring the company closer to its digital labor vision. But beneath the innovation lies the ever-important question: are these steps enough to convince enterprises that AI agents are ready for prime time? Let’s take a closer look at the research.

Training Agents in a Sandbox

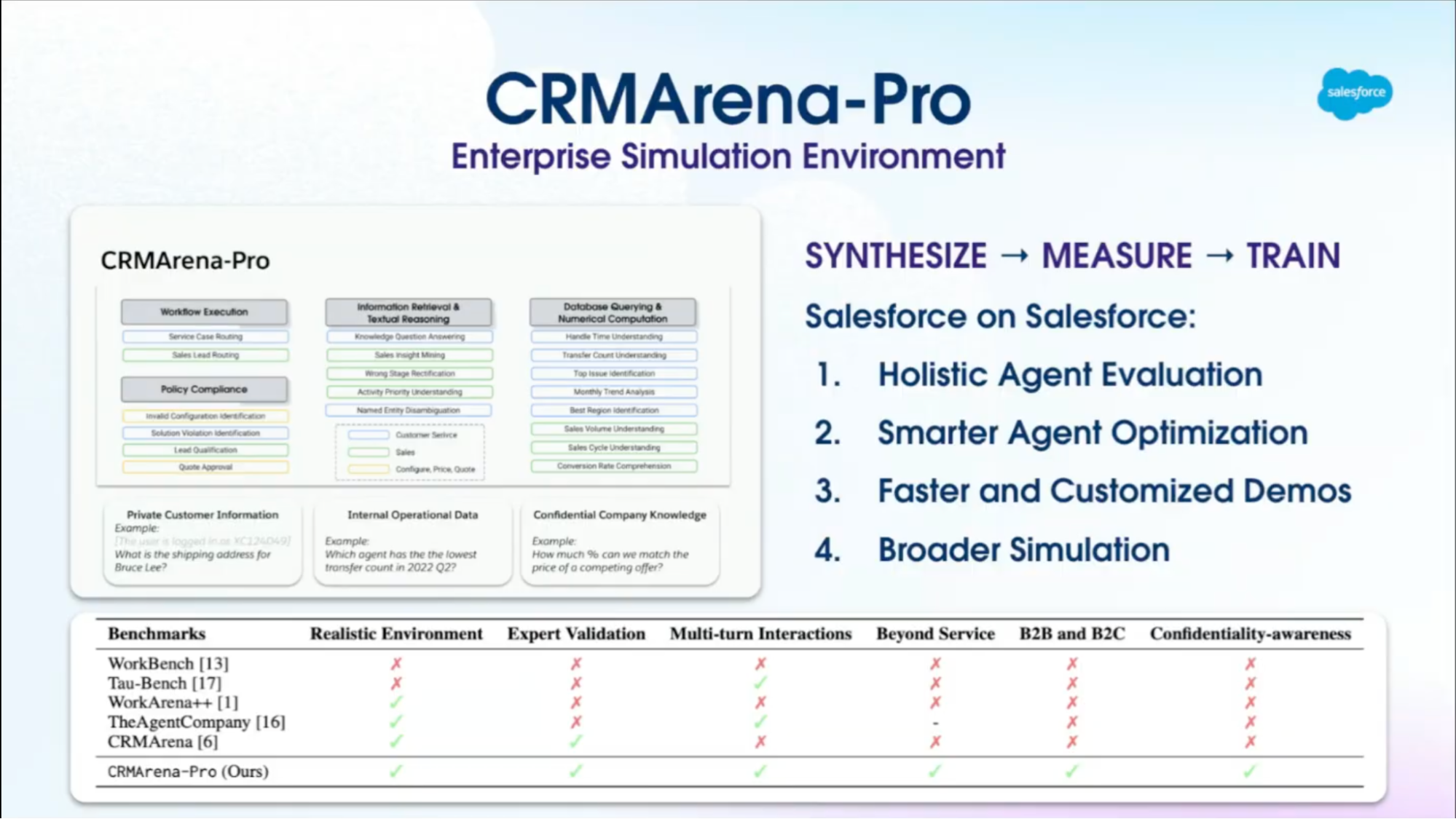

The standout announcement from the research was CRMArena-Pro. Likened to a flight simulator for AI, this system allows you to test agents against complex and authentic scenarios – from sales forecasting to service escalations – in what is practically a sandbox.

This means that you can really put your agent to the test – as rigorously as you desire – to truly understand whether or not it’s ready for deployment.

Having recently discussed the importance of agent accuracy on Salesforce Ben, this feels like a huge step for Salesforce’s AI development. In high-stakes environments – such as healthcare, government, or the public sector – where decisions can directly impact lives, the ability to thoroughly test an agent will make enterprises far more likely to adopt the product.

Enterprises want to ensure that they’re not on the wrong end of a PR disaster, where an agent hallucinates during a customer interaction or starts spilling sensitive data to the wrong people – and CRMArena-Pro could certainly help mitigate that risk.

Benchmarks Beyond the Hype

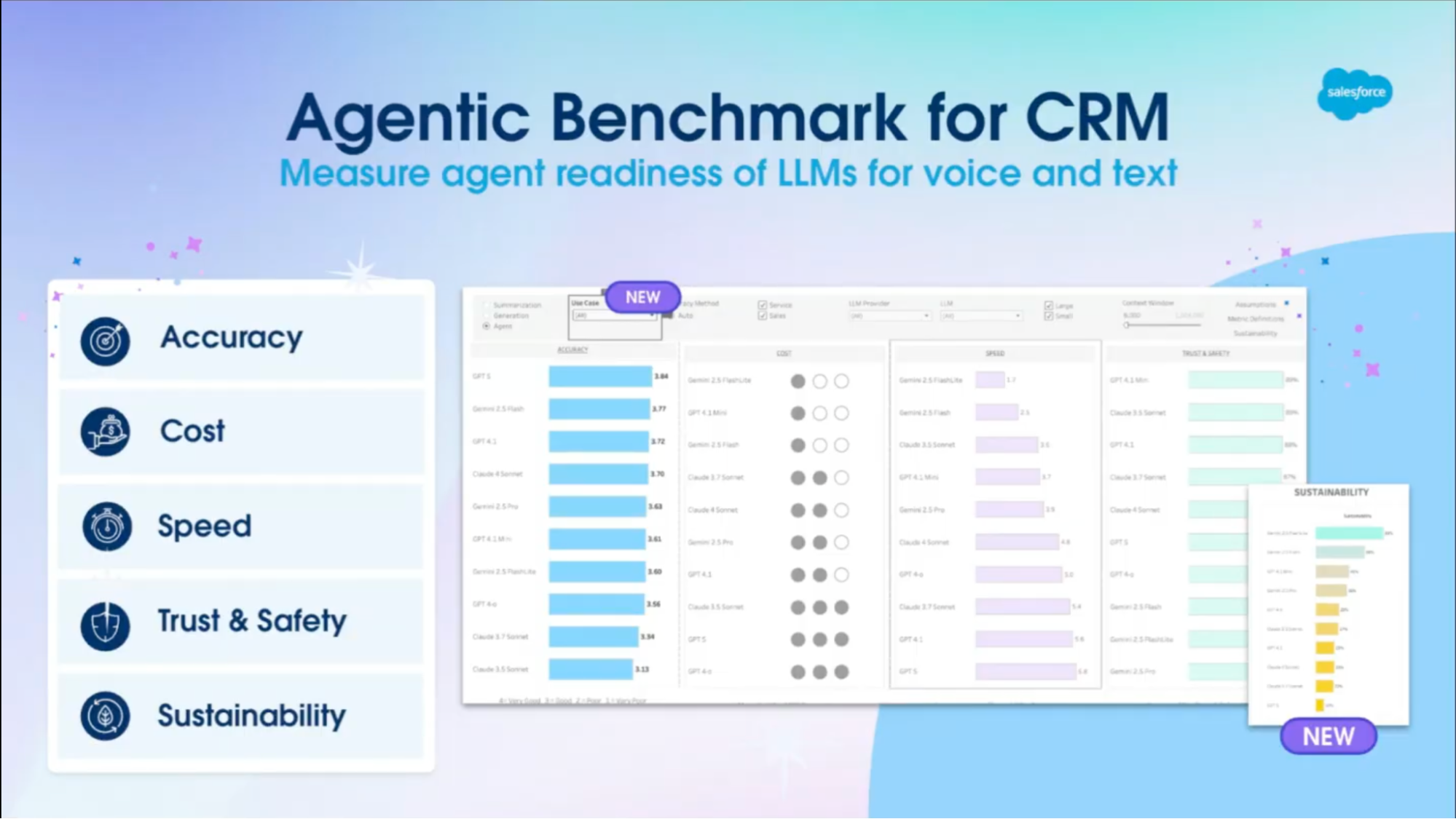

Salesforce also announced the launch of Agentic Benchmark for CRM, which looks to cut through AI marketing and help people comprehend what a valuable agent looks like.

This new benchmark acts as a league table for agent performance. Instead of focusing on the size of the agent or on trivial tests, it scores each agent on important enterprise metrics such as cost, accuracy, speed, security, and sustainability. You can now compare agents properly, side-by-side, in business-relevant situations.

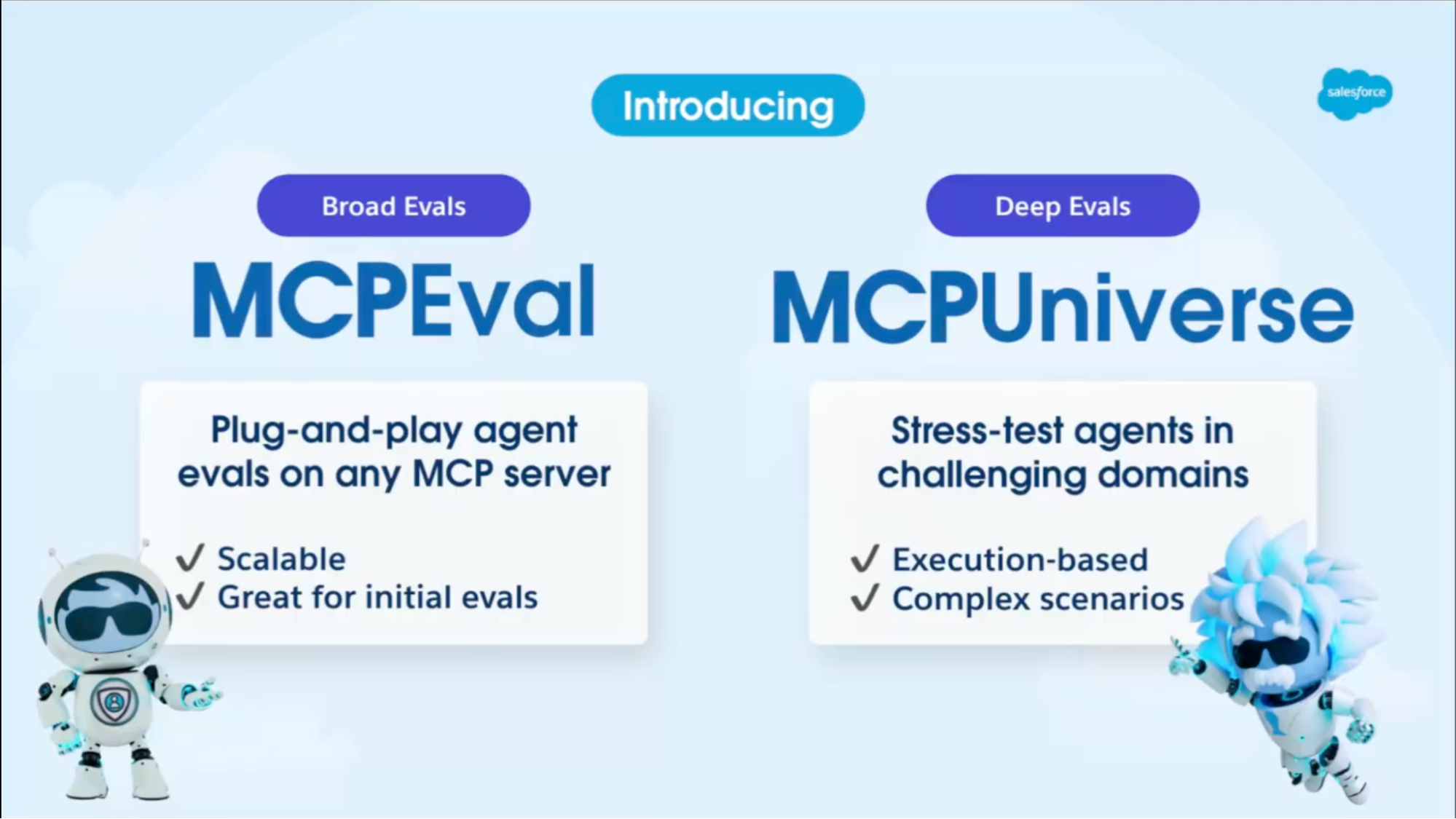

The CRM company has also launched two complementary benchmarks alongside this:

- MCP-Eval: Gives the agent synthetic tasks that allow scalable, automatic evaluation. These are quick, broad tests that act as practice drills.

- MCP-Universe: Provides tougher, real-world scenarios to really stress-test agents – like throwing them into a chaotic call center simulation and seeing if they cope.

In essence, this gives enterprises the power to select their agents as they would an employee, and compare overall performance between agents before purchasing. But project teams implementing these agents would do well to proceed with caution.

Benchmarks are an excellent tool to evaluate and measure two agents against an identical set of factors, but no benchmark can be a substitute for iterative fine-tuning and testing with your actual use case in your actual environment and with your actual data. Which brings us nicely to the last announcement…

Fixing the CRM Duplicate Problem

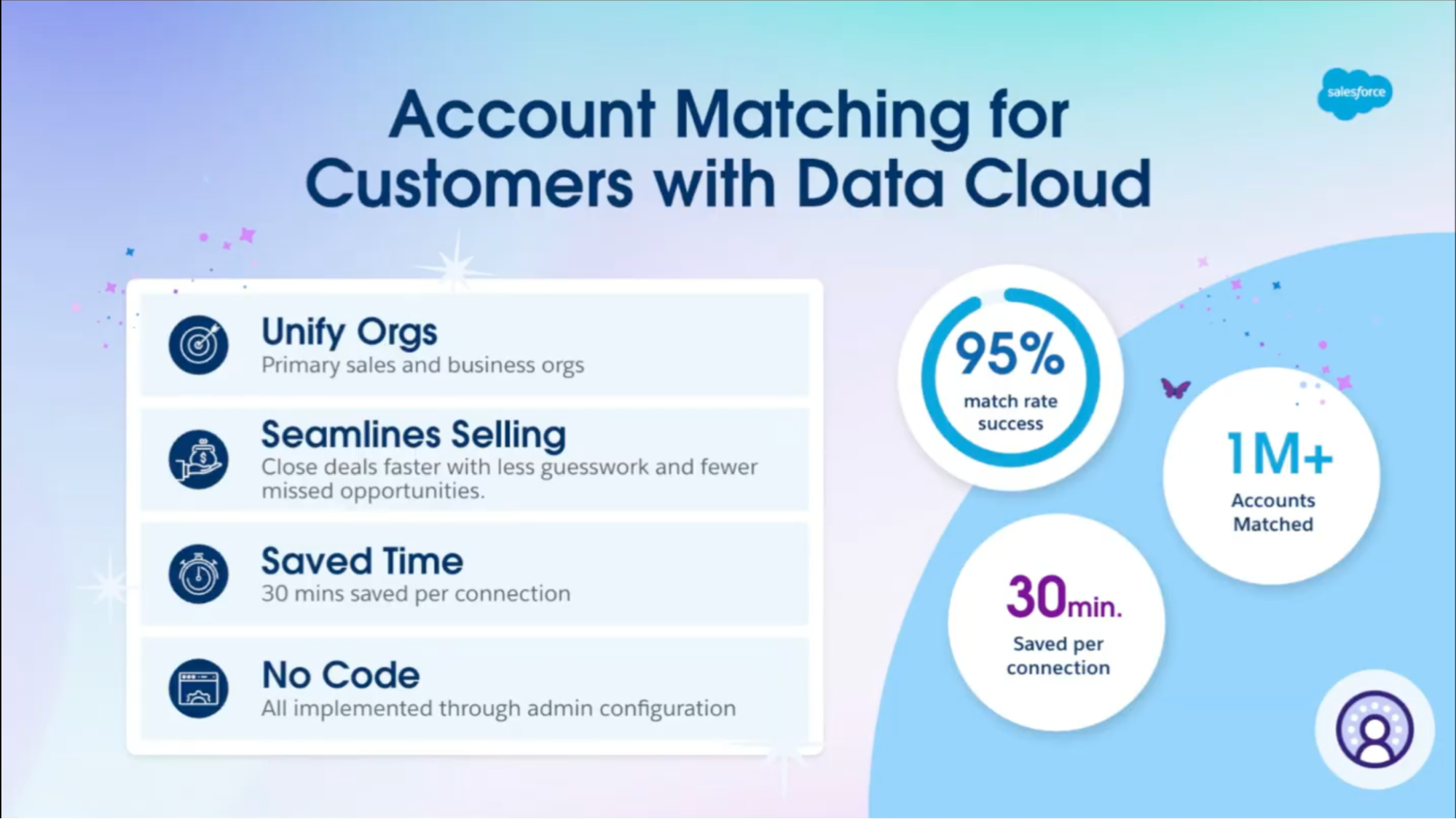

The least sexy but arguably most valuable update from Salesforce is Account Matching.

Salesforce has now incorporated fine-tuned language models that automatically reconcile any messy duplicate data in your CRM. According to Salesforce, Account Matching has already enjoyed relative success, with one customer reportedly unifying over a million records with 95% accuracy, cutting their average handling time by 30%.

When we looked at the biggest roadblocks to Agentforce adoption earlier this year, technical debt was a recurring theme. Many businesses are weighed down by CRMs clogged with inaccurate, duplicate, or poorly structured data, making it nearly impossible to roll out Agentforce successfully.

That’s why Salesforce’s new Account Matching feature could be a game-changer. By automatically identifying and merging duplicate records into a single source of truth, it clears away one of the messiest barriers to adoption. Cleaner data means smoother implementations, faster wins, and a stronger foundation for Agentforce to actually deliver value.

However, attention must be drawn back to Salesforce’s 95% accuracy claim, which still leaves a fairly large margin for error. Taken at face value, 95% accuracy across a million records means roughly 50,000 records were incorrectly unified, potentially leading to misrouted sales efforts, duplicate outreach, or even lost revenue if the wrong accounts are merged.
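To make that arithmetic concrete, here is a minimal sketch. It assumes that "accuracy" means the share of records unified correctly and that the customer had exactly one million records (Salesforce only says "over a million", so the real figure would be somewhat higher). The function name `expected_mismatches` is purely illustrative and is not part of any Salesforce product or API.

```python
def expected_mismatches(total_records: int, accuracy: float) -> int:
    """Estimate how many records fall outside a stated accuracy rate.

    Assumption: accuracy is the fraction of records handled correctly,
    so (1 - accuracy) is the fraction handled incorrectly.
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    return round(total_records * (1.0 - accuracy))


# One million records at the reported 95% accuracy.
print(expected_mismatches(1_000_000, 0.95))
```

The same function shows how quickly the absolute number grows with volume: the error rate stays fixed, but the number of affected records scales linearly with the size of the CRM.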

While Account Matching addresses the issues customers have raised, it’s another example of an AI tool that may struggle at scale.

Final Thoughts

Salesforce’s recent breakthroughs with AI are certainly admirable. It’s great to see the CRM giant take this initiative based on the customer feedback it has received since Agentforce’s initial release. The company is, of course, keen for adoption to accelerate, and is taking some necessary and transparent steps to reassure the ecosystem that agents are the way forward in Salesforce.

Still, concerns remain. Can enterprises really afford the 3–5% margin of error that lingers in these systems? Salesforce is putting more guardrails in place to boost reliability, but full trust in agents still feels some distance away.

That said, there’s little doubt Salesforce will keep iterating at pace. The real question isn’t if agents will be ready – it’s when.
