Assuming you have already done due diligence on your AI use case (the problem cannot be solved with simple heuristics, AI-driven insights genuinely benefit the use case, and the cost of being wrong is acceptable and does not compromise user trust or compliance), the conversation naturally shifts from “Should we use AI?” to “How do we use AI responsibly?”

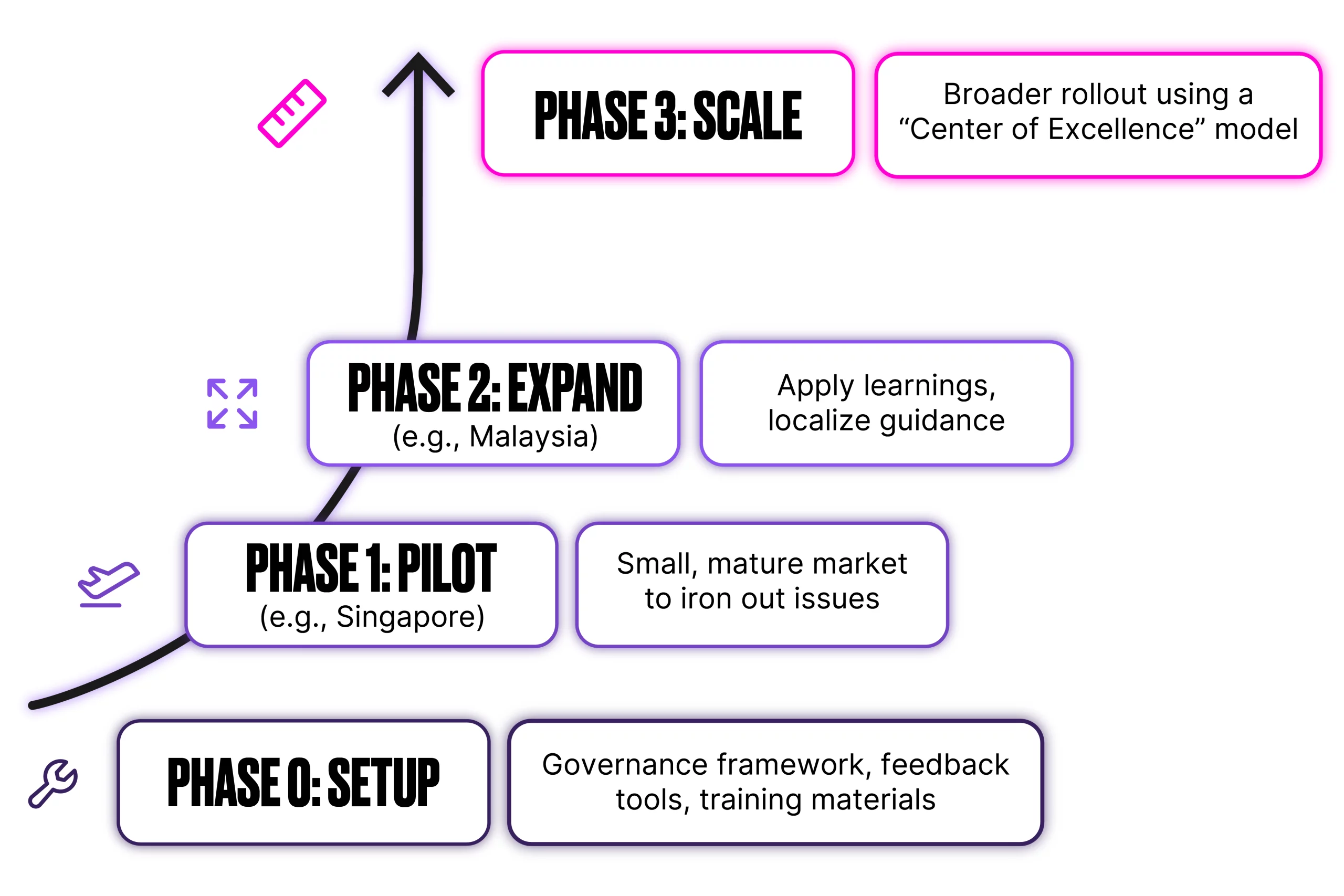

Here, we explore how to establish practical, user-friendly AI governance within Salesforce, using the example of deploying Einstein Lead Scoring across multiple markets such as Singapore and Malaysia. Along the way, we look at how to implement feedback loops, monitor performance, and scale thoughtfully across regions.

Understanding AI Governance in Salesforce

AI governance is more than toggling an AI feature onto autopilot; it is about understanding the ripple effects. Once scores appear on records, users will ask questions like:

- “Why did this lead get a high score?”

- “How do I know I can trust these predictions?”

- “What do I do if the score doesn’t feel right?”

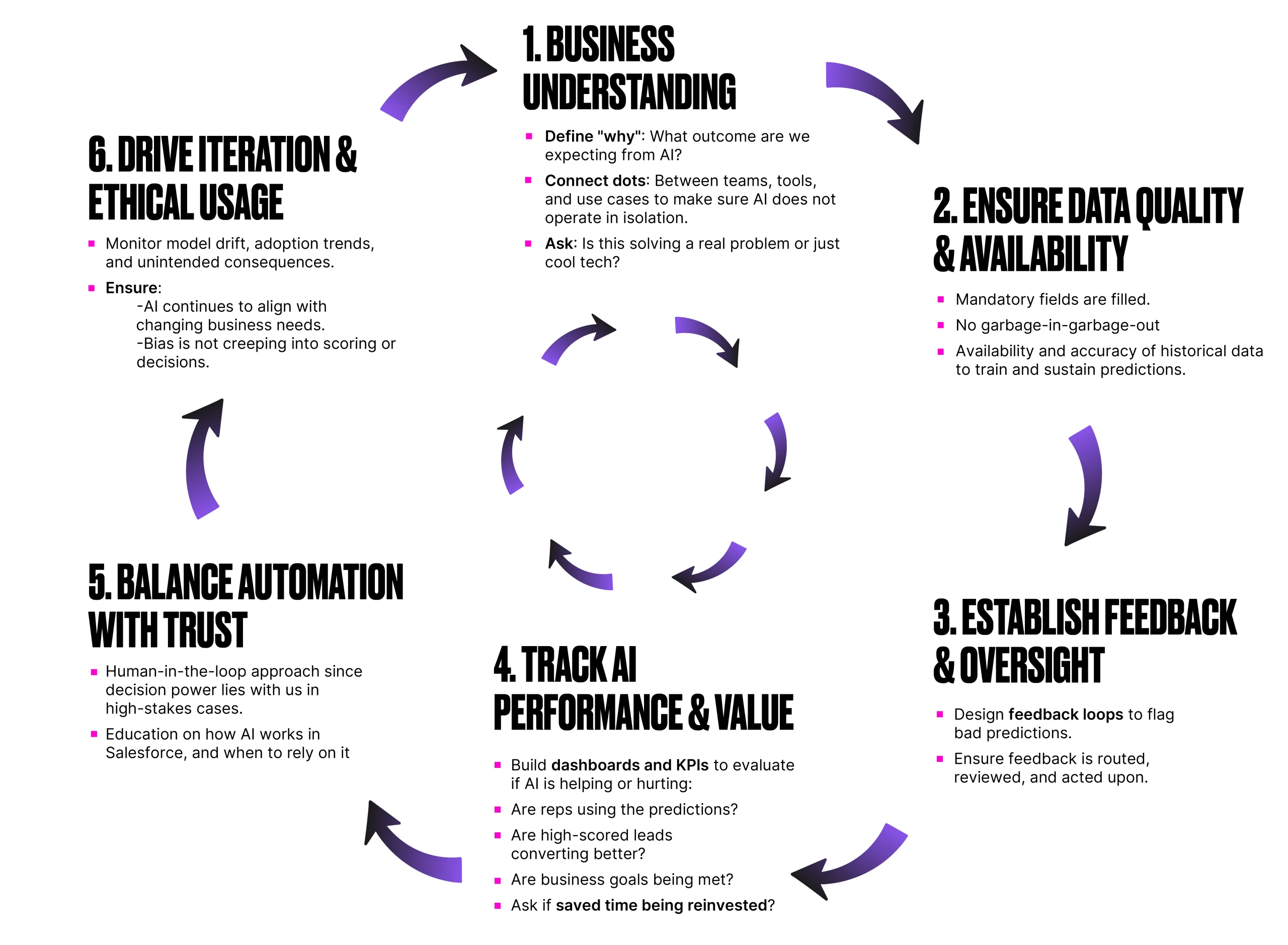

Model metrics alone are not enough. Honest governance also looks at value realization, behavioral shifts, and accountability. That means understanding how AI affects processes, decisions, and user behavior, and evaluating whether the insights complement human talent. The question is not only whether scores were produced, but: did we achieve what we hoped? And if yes, what did it change?

Most importantly, “Where is the saved time going?”

This requires setting clear goals and tracking real-world outcomes, such as time-to-first-touch, calendar behaviors (more meetings? better follow-ups?), and manager insights on whether reps are truly spending more time selling. AI governance in your Salesforce org is therefore both a human and a technology decision.

Our Role In AI Governance

We are in a powerful position to define what “good” looks like – setting the right KPIs, enabling feedback loops, and guiding responsible adoption. Using native Salesforce tools like custom objects, flows, and reports, we can build structured oversight while keeping focus on what truly matters, and letting go of what doesn’t.

Track the metrics below to reflect not just technical accuracy but also how well AI is integrated into human workflows and trusted across the org.

- Lead Conversion Rate by Score: Gauge predictive accuracy by measuring how often high-scoring leads convert.

- Adoption Rate: Assess usage and trust by tracking how frequently users rely on the scores in their decision-making.

- Feedback Volume: Measure engagement with the governance loop by monitoring how often users submit feedback.

- Score Disagreement Rate: Identify trust gaps by tracking how often users flag scores as “off” or inaccurate.

- Model Drift Monitoring: Ensure consistency over time by observing shifts in model performance and behavior.
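As a rough illustration of how the first few metrics could be computed from exported lead records, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `ScoredLead` fields, the `score_used` and `flagged_off` flags, the 1-99 score scale, and the 70/40 band cut-offs are hypothetical and would need to be mapped to whatever fields and thresholds your org actually uses.

```python
from dataclasses import dataclass


@dataclass
class ScoredLead:
    score: int          # lead score, assumed here to be on a 1-99 scale
    converted: bool     # did the lead convert?
    score_used: bool    # hypothetical flag: the rep acted on the score
    flagged_off: bool   # hypothetical flag: a user marked the score as "off"


def score_band(score: int) -> str:
    """Bucket a score; the 70/40 cut-offs are illustrative assumptions."""
    if score >= 70:
        return "high"
    if score >= 40:
        return "medium"
    return "low"


def conversion_rate_by_band(leads: list[ScoredLead]) -> dict[str, float]:
    """Lead Conversion Rate by Score: converted leads / all leads, per band."""
    totals: dict[str, int] = {}
    wins: dict[str, int] = {}
    for lead in leads:
        band = score_band(lead.score)
        totals[band] = totals.get(band, 0) + 1
        wins[band] = wins.get(band, 0) + int(lead.converted)
    return {band: wins[band] / totals[band] for band in totals}


def share(leads: list[ScoredLead], flag: str) -> float:
    """Fraction of leads for which the given boolean field is True."""
    if not leads:
        return 0.0
    return sum(1 for lead in leads if getattr(lead, flag)) / len(leads)


# Made-up sample data, purely to exercise the functions.
sample = [
    ScoredLead(score=85, converted=True, score_used=True, flagged_off=False),
    ScoredLead(score=90, converted=False, score_used=True, flagged_off=True),
    ScoredLead(score=55, converted=True, score_used=True, flagged_off=False),
    ScoredLead(score=30, converted=False, score_used=False, flagged_off=False),
]

rates = conversion_rate_by_band(sample)
adoption_rate = share(sample, "score_used")
disagreement_rate = share(sample, "flagged_off")
print(rates, adoption_rate, disagreement_rate)
```

Comparing `rates` across review periods is one simple way to start watching for drift: if the high band stops converting better than the lower bands, the scores deserve a closer look.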

When launching AI across markets, a phased approach works best.

After each phase, run a brief retrospective:

- What worked? What didn’t? What needs local adaptation?

Since AI governance is an ongoing discipline, the intensity of monitoring can align with your project’s lifecycle:

| Phase | Duration | Frequency of Review |
|---|---|---|
| Launch Phase | 0–3 months | Weekly |
| Stabilization | 3–9 months | Monthly |
| Business-as-Usual | 9–12+ months | Quarterly/Annual |

Trigger events like new lead sources, sales processes, or regulatory changes should restart the review cycle, ensuring governance remains relevant and responsive.
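The cadence above, including the restart on a trigger event, can be sketched as a small lookup. This is only an illustration: the function name and parameters are hypothetical, and because the phase boundaries in the table overlap at months 3 and 9, the sketch assumes a boundary month belongs to the later phase.

```python
from typing import Optional


def review_frequency(
    months_since_launch: float,
    months_since_trigger: Optional[float] = None,
) -> str:
    """Return the review cadence for the current point in the lifecycle.

    A trigger event (new lead source, new sales process, regulatory change)
    restarts the cycle, so the clock is the time since the most recent of
    launch or trigger.
    """
    if months_since_launch < 0:
        raise ValueError("months_since_launch must be non-negative")
    clock = months_since_launch
    if months_since_trigger is not None:
        if months_since_trigger < 0:
            raise ValueError("months_since_trigger must be non-negative")
        clock = min(clock, months_since_trigger)

    if clock < 3:        # Launch Phase
        return "Weekly"
    if clock < 9:        # Stabilization
        return "Monthly"
    return "Quarterly/Annual"  # Business-as-Usual


print(review_frequency(1))       # one month after launch
print(review_frequency(12, 1))   # a trigger event occurred one month ago
```

Keeping the rule this explicit makes it easy to agree in a retrospective on exactly when a market moves from weekly to monthly reviews.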

AI Governance Checklist for Salesforce Projects

To help operationalize governance, here’s a practical checklist you can embed in your BA toolkit, project lifecycle, or sprint ritual. You can download it here.

Practical Tips for Implementing AI Governance in Salesforce Projects

Salesforce-native tools can help operationalize responsible AI:

- Capture feedback with a custom object, since users need a structured way to log concerns like “this score seems wrong” or “the recommendation missed context”. Place a quick action on the relevant record (Lead, Opportunity) to submit feedback. You can also use record types to distinguish feedback types (e.g., Lead Scoring, Next Best Action, Forecasting).

- Make feedback actionable with record-triggered flows by either sending an email or creating a task with priority and assignment rules.

- Visualise trends with dynamic dashboards for localized insight. You can report on the volume of AI feedback by region, team, or model; common feedback reasons (too high, too low, irrelevant); conversion rate by score band; and the percentage of leads flagged versus total scored.

- Maintain change logs for traceability, so that changes and their owners are on record.

- Alert on anomalies for faster course correction. Scheduled flows or reports can alert you, for example, when the conversion rate of high-scoring leads drops significantly, when no feedback has been submitted in a month (a sign of disengagement), or when high-score leads remain untouched after seven days (a sign of low adoption).
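In Salesforce, these alerts would typically live in scheduled flows or reports. To make the underlying logic concrete, the three checks can be sketched in plain Python. The function names, the dictionary keys, the 20% relative-drop threshold, the 30-day window, and the score cut-off of 70 are all assumptions chosen for illustration, not Salesforce defaults.

```python
from datetime import date
from typing import Optional


def conversion_drop_alert(
    baseline_rate: float, current_rate: float, max_relative_drop: float = 0.2
) -> bool:
    """True if high-score conversion fell by more than the allowed share."""
    if baseline_rate <= 0:
        return False  # no meaningful baseline to compare against
    return (baseline_rate - current_rate) / baseline_rate > max_relative_drop


def no_feedback_alert(
    last_feedback: Optional[date], today: date, window_days: int = 30
) -> bool:
    """True if no feedback was submitted within the window (disengagement)."""
    return last_feedback is None or (today - last_feedback).days > window_days


def untouched_high_score_leads(
    leads: list[dict], today: date, min_score: int = 70, max_idle_days: int = 7
) -> list[str]:
    """IDs of high-score leads with no activity after max_idle_days."""
    return [
        lead["id"]
        for lead in leads
        if lead["score"] >= min_score
        and lead["last_activity"] is None
        and (today - lead["created"]).days >= max_idle_days
    ]


# Made-up sample data, purely to exercise the checks.
today = date(2024, 6, 30)
leads = [
    {"id": "A", "score": 88, "created": date(2024, 6, 10), "last_activity": None},
    {"id": "B", "score": 91, "created": date(2024, 6, 28), "last_activity": None},
    {"id": "C", "score": 35, "created": date(2024, 6, 1), "last_activity": None},
    {"id": "D", "score": 80, "created": date(2024, 6, 5),
     "last_activity": date(2024, 6, 6)},
]

print(conversion_drop_alert(baseline_rate=0.30, current_rate=0.20))
print(no_feedback_alert(last_feedback=date(2024, 5, 1), today=today))
print(untouched_high_score_leads(leads, today))
```

What counts as a “significant” drop is a business decision; agreeing on the threshold with sales leadership before launch avoids debating it after the first alert fires.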

Summary

Baking AI governance into user stories, tracking methods, and review rituals is a way to create AI that is ethical and audit-ready. Over time, this approach builds lasting organizational trust, ensuring governance is foundational and not an afterthought. The goal is that users can trust insights, customers are treated equally, and leadership feels confident to scale automation.

The topic might sound abstract at first, but it is really just accountability in action. Simply asking:

“Is this AI behaving in a way I’d be proud to defend?”

can help you shift from passively enabling AI to actively guiding it. The job is to turn governance from a checklist into a mindset, grounded in how we design, build, and review every AI-powered experience in Salesforce.