Maria has been a benefits caseworker for eleven years. She knows the system better than most – but the system doesn’t work as well as she does. Which forms get flagged? Which policies changed last quarter? Which cases need extra attention?

What slows her down isn’t the work. It’s everything around it. Every morning starts with the same friction. A legacy database that takes minutes to load. Policy documents that are buried across systems that don’t talk to each other. A growing queue of unresolved cases that has nothing to do with her capability and everything to do with the infrastructure she depends on.

This is the reality across public sector organizations today, and it is exactly where most conversations about AI start going wrong.

Salesforce’s Agentforce for Public Sector is being positioned as the answer – and to be fair, it solves a real part of the problem. It introduces a new operating model where AI agents can assist, automate, and scale government services.

But there’s a detail that doesn’t get enough attention. The technology itself is only a small part of the equation. Most of the work sits in how it’s deployed, what data it runs on, and how well the surrounding systems are designed to support it. Get those wrong, and the system doesn’t improve outcomes. It just produces faster, more confident mistakes.

What Agentforce Actually Changes

Agentforce isn’t a chatbot upgrade. Thinking of it that way is where most implementations start going off track. What Salesforce is building here is a shift from reactive systems to agentic systems. Instead of waiting for input and returning a response, these agents interpret context, decide what needs to happen, execute actions across systems, and adjust based on what they find.

That shift matters because public sector environments are rarely clean or predictable. Requests don’t follow neat decision trees. Data is incomplete. Policies evolve. Edge cases are the norm, not the exception.

Agentforce is designed to operate in that kind of environment. Built on Public Sector Solutions and Data 360 (formerly Data Cloud), it already understands common government workflows like benefits processing, complaint management, and permit handling. That reduces the need to start from scratch.

Salesforce has also positioned Agentforce as part of a broader push toward enterprise AI, with secure deployment models and compliance-ready infrastructure for government use. But a faster start doesn’t guarantee success later. It just means you reach the real challenges sooner.

Why the Atlas Reasoning Engine Changes the Game

The behavior of Agentforce comes down to what sits underneath it – the Atlas Reasoning Engine. Earlier AI systems were optimized for speed. They generated quick responses, but they struggled the moment a request became complex or required context beyond what was explicitly provided.

In controlled demos, those earlier systems performed well. In real environments, they broke. Atlas takes a different approach. Instead of jumping straight to an answer, it retrieves relevant data, evaluates whether that data actually applies, and checks its own response before returning it. This extra layer of reasoning is what reduces hallucinations and improves reliability in high-stakes scenarios.

Salesforce has indicated that early internal testing showed measurable improvements in response relevance and accuracy compared to traditional approaches. What matters more than the numbers, though, is how the system behaves.

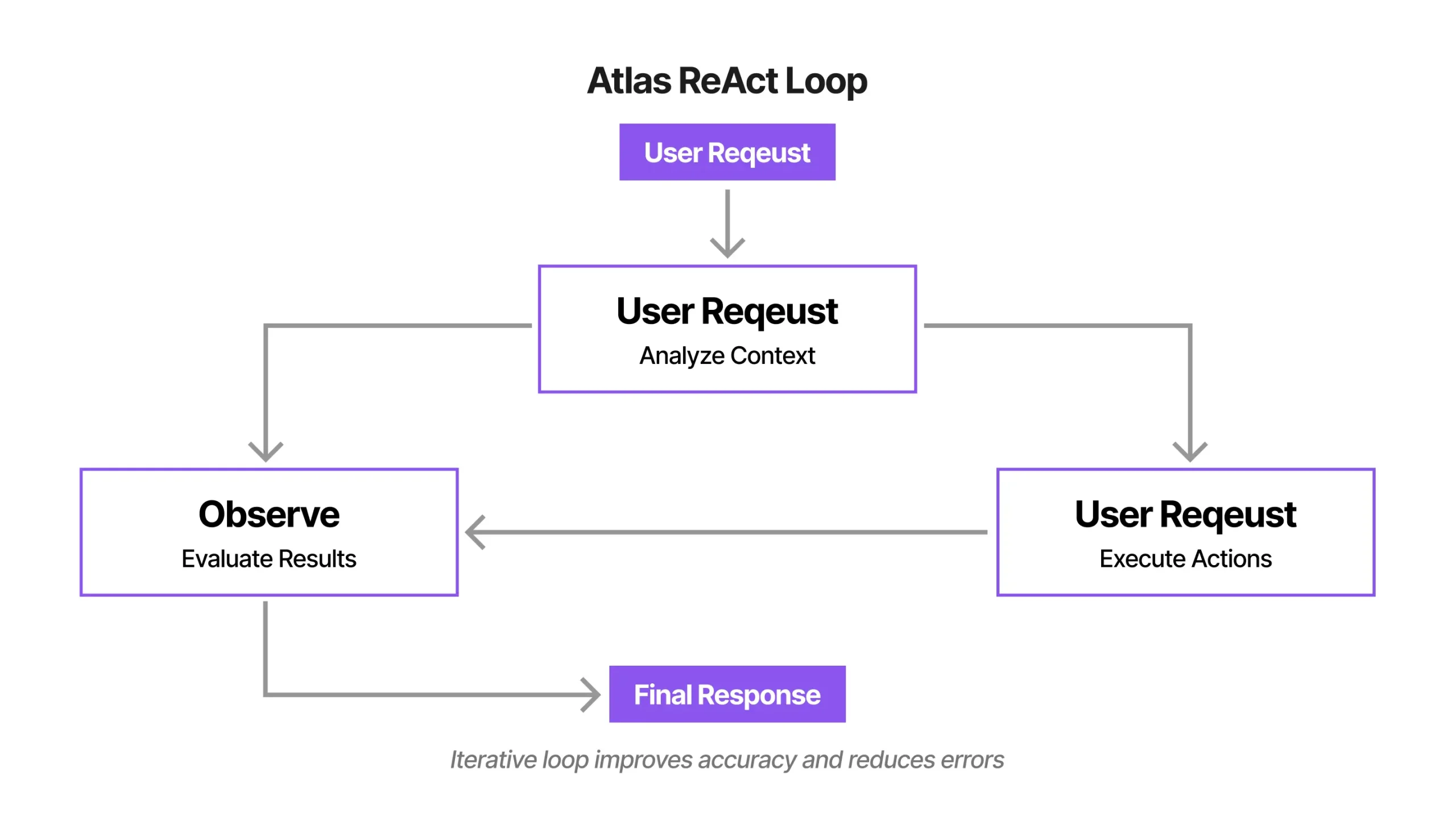

It doesn’t follow a fixed path. It moves through a loop of reasoning, acting, and observing. If something doesn’t align, it adjusts. If information is missing, it asks for more context. That ability to iterate mid-task is what allows it to function in real-world conditions where inputs are rarely perfect.

To understand how this works in practice, here’s a simplified view of the reasoning loop:
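The reason, act, observe cycle can also be sketched in a few lines of Python. This is an illustration only, not how the Atlas Reasoning Engine is implemented: `CaseState`, `REQUIRED_FACTS`, `reason`, `act`, and `run_loop` are hypothetical names, and the "reasoning" here is just a check for missing facts.

```python
from dataclasses import dataclass, field


@dataclass
class CaseState:
    """Working memory for one request (all field names are hypothetical)."""
    request: str
    facts: dict = field(default_factory=dict)
    log: list = field(default_factory=list)


# Hypothetical facts the agent needs before it can answer.
REQUIRED_FACTS = ("applicant_id", "household_size")


def reason(state):
    """Decide the next step from what is known so far."""
    for name in REQUIRED_FACTS:
        if name not in state.facts:
            return ("ask", name)
    return ("answer", None)


def act(step, answers):
    """Carry out an 'ask' step by looking the value up in canned answers."""
    kind, name = step
    return answers.get(name) if kind == "ask" else None


def run_loop(request, answers, max_steps=5):
    """Reason, act, observe, and repeat until ready or stuck."""
    state = CaseState(request)
    for _ in range(max_steps):
        step = reason(state)
        state.log.append(step[0] if step[1] is None else f"{step[0]}:{step[1]}")
        if step[0] == "answer":
            return "ready", state
        observation = act(step, answers)
        if observation is None:
            # Missing information that nobody can supply: hand off to a person.
            return "needs_human", state
        state.facts[step[1]] = observation
    return "needs_human", state
```

Calling `run_loop("renew benefits", {"applicant_id": "A-1"})` stops with `needs_human`, because the loop asks for `household_size` and gets nothing back. The point of the sketch is the shape of the loop: each pass re-evaluates what is known instead of following a fixed path.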

But better reasoning doesn’t remove the need for strong architecture. It makes weak architecture fail faster.

Where Most Implementations Actually Break

On paper, the way Agentforce operates is straightforward. A request comes in and gets mapped to a defined topic. The system builds context using available data, prior interactions, and configured instructions. Based on that, it determines what actions to take – triggering Flows, calling APIs, or orchestrating processes through OmniStudio. The output is evaluated against guardrails before it reaches the user.

In reality, this flow exposes every weakness in the system it sits on.

If topics are poorly defined, the agent makes the wrong decisions. If workflows aren’t modular or accessible, the agent can’t execute what it has reasoned. If data is incomplete or inconsistent, the output reflects that immediately.
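A toy version of that flow makes the failure points easier to see. The sketch below is an assumption-heavy illustration, not Agentforce code: `TOPICS`, `BLOCKED_TERMS`, `map_topic`, `passes_guardrails`, and `handle` are hypothetical names, and keyword matching stands in for whatever topic classification the platform actually performs.

```python
# Hypothetical topic definitions: a request is matched by simple keywords.
TOPICS = {
    "benefits": ("benefit", "eligibility", "renewal"),
    "permits": ("permit", "license"),
    "complaints": ("complaint", "report"),
}

# Hypothetical guardrail: terms that must never appear in a reply.
BLOCKED_TERMS = ("ssn", "social security number")


def map_topic(request):
    """Return the first topic whose keywords appear in the request, else None."""
    text = request.lower()
    for topic, keywords in TOPICS.items():
        if any(word in text for word in keywords):
            return topic
    return None


def passes_guardrails(draft):
    """Reject drafts that contain any blocked term."""
    lowered = draft.lower()
    return not any(term in lowered for term in BLOCKED_TERMS)


def handle(request, context):
    """Topic mapping, context check, draft reply, guardrail check."""
    topic = map_topic(request)
    if topic is None:
        return {"status": "escalate", "reason": "no matching topic"}
    if "case_id" not in context:
        return {"status": "escalate", "reason": "missing context: case_id"}
    draft = f"[{topic}] Case {context['case_id']}: next step queued."
    if not passes_guardrails(draft):
        return {"status": "blocked", "reason": "guardrail"}
    return {"status": "ok", "topic": topic, "reply": draft}
```

Each early return corresponds to one of the weaknesses above: a vague topic list sends requests to the wrong branch or to none, and missing context stops the agent before it can act.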

This is why so many deployments stall. Not because the AI isn’t capable, but because the foundation it relies on wasn’t designed for this level of orchestration. Agentforce doesn’t fix broken systems. It surfaces them.

Compliance Is Not the Finish Line

There’s a common assumption that once a platform is compliant, the hard part is done. Salesforce has achieved FedRAMP High authorization for Agentforce and related products, allowing it to be used in environments handling sensitive government data. That’s important.

But it’s also misunderstood. FedRAMP validates the platform infrastructure. It doesn’t validate how an individual organization configures or uses that platform. The responsibility for compliant deployment still sits with the agency. This is where things start to get complicated.

To make this clearer, here’s how the key Government Cloud environments differ:

| Environment | Compliance Coverage | Best For |
| --- | --- | --- |
| Government Cloud Plus | FedRAMP High, IRS 1075, DoD IL2 | Civilian agencies, state, and local governments |
| Government Cloud Plus – Defense | FedRAMP High, DoD IL4, DoD IL5 | Defense agencies, military, contractors handling CUI |
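One way to read the table is as a lookup from required compliance coverage to candidate environments. The sketch below encodes only the rows shown here; `ENVIRONMENTS` and `candidate_environments` are hypothetical names, and an actual selection should be checked against Salesforce's current compliance documentation rather than this snippet.

```python
# Coverage copied from the table above; nothing else is assumed about
# what each environment supports.
ENVIRONMENTS = {
    "Government Cloud Plus": {"FedRAMP High", "IRS 1075", "DoD IL2"},
    "Government Cloud Plus – Defense": {"FedRAMP High", "DoD IL4", "DoD IL5"},
}


def candidate_environments(required):
    """Return environments whose listed coverage includes every requirement."""
    required = set(required)
    return [
        name
        for name, coverage in ENVIRONMENTS.items()
        if required <= coverage  # subset test: all requirements are covered
    ]
```

A workload that needs both `IRS 1075` and `DoD IL5` returns an empty list under this table, which is the kind of mismatch that is far cheaper to find at design time than during an audit.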

Choosing the wrong environment, enabling features without understanding their compliance scope, or misaligning data classifications can introduce gaps that only become visible during audits. By that point, the issue isn’t technology. It’s the decisions made during implementation.

The same applies to governance. Updated federal guidance around AI requires clear accountability, visibility into how AI is used, and structured risk management practices. The platform can support these requirements, but it doesn’t enforce them. That responsibility doesn’t go away with better technology.

Why Trust Architecture Matters More Than Features

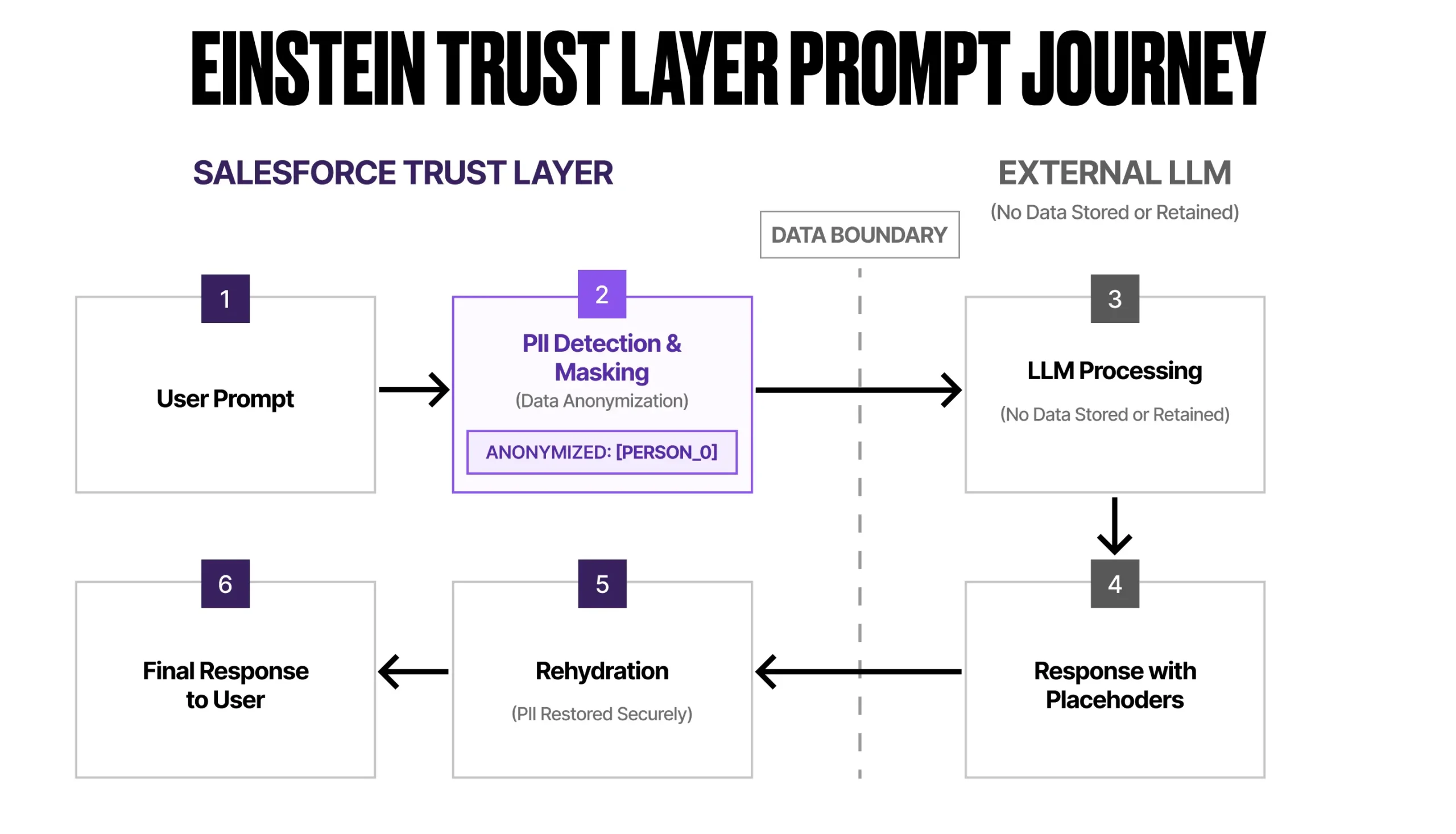

Security is one of the biggest barriers to AI adoption in the public sector, and for good reason. Salesforce addresses this through its Trust Layer, which introduces mechanisms like zero data retention, PII masking, and permission-aware data grounding. These controls are designed to ensure that sensitive information isn’t exposed and that responses remain aligned with user access levels.

Here’s how the Trust Layer handles sensitive data step by step:

According to Salesforce, prompts sent to external models are masked, and no customer data is retained outside the platform environment.
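As a rough illustration of two of those mechanisms, PII masking and permission-aware grounding, consider the sketch below. It is not the Trust Layer's implementation: the regular expressions, the `allowed_roles` field, and the functions `mask_pii`, `visible_records`, and `build_prompt` are all assumptions made for the example.

```python
import re

# Simplified patterns for illustration; real PII detection covers far more.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
EMAIL_PATTERN = re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")


def mask_pii(text):
    """Replace SSN-like and email-like strings with placeholders."""
    text = SSN_PATTERN.sub("[SSN]", text)
    return EMAIL_PATTERN.sub("[EMAIL]", text)


def visible_records(records, user_roles):
    """Keep only records that share at least one role with the user."""
    return [r for r in records if r["allowed_roles"] & user_roles]


def build_prompt(question, records, user_roles):
    """Ground the prompt in permitted records, then mask before sending."""
    grounded = visible_records(records, user_roles)
    context = "\n".join(r["text"] for r in grounded)
    return mask_pii(f"Context:\n{context}\n\nQuestion: {question}")
```

Note what the filter does not do: if a record's `allowed_roles` is set too broadly, `visible_records` will pass it through without complaint. The code enforces the configuration it is given.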

These safeguards are critical. But they don’t compensate for poor implementation. If permissions are misconfigured or data access isn’t clearly defined, the system will still operate within those boundaries – even if those boundaries are flawed.

The technology enforces rules. It doesn’t question whether those rules are correct. Trust isn’t created by features alone. It comes from how those features are implemented and governed.

The Data Problem Behind Every AI Conversation

At some point, every Agentforce project runs into the same issue. The data isn’t ready. It’s fragmented across systems, stored in inconsistent formats, and often tied to legacy infrastructure that was never designed to integrate with modern platforms.

Some of it lives in structured databases. Some of it sits in documents. Some of it requires manual interpretation. An AI system can only operate on what it can access. If that access is incomplete, the output is incomplete as well.

Most successful implementations take a phased approach. They start by connecting a limited set of reliable data sources and building narrowly scoped use cases that deliver immediate value. In parallel, they work toward a broader data strategy using Data 360 and integration layers like MuleSoft to unify systems over time. Salesforce positions Data 360 as a way to create a unified view across disparate systems, enabling more accurate and context-aware AI responses.

That positioning is accurate. But it also comes with a reality that isn’t always emphasized. Data unification takes time. It requires coordination across teams, investment in integration, and a clear understanding of how data flows across the organization. There isn’t a shortcut around it.
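Even a tiny case shows why. The sketch below normalizes records from two hypothetical sources, a legacy export and a web portal, into one shape and flags disagreements. The field names (`CLIENT_NO`, `DOB`, `clientId`, `birthDate`) and the functions are invented for illustration and have nothing to do with how Data 360 or MuleSoft work internally.

```python
from datetime import datetime


def from_legacy(row):
    """Legacy export: padded IDs and MM/DD/YYYY dates (assumed format)."""
    return {
        "client_id": row["CLIENT_NO"].strip(),
        "birth_date": datetime.strptime(row["DOB"], "%m/%d/%Y").date().isoformat(),
        "source": "legacy",
    }


def from_portal(row):
    """Portal record: already uses ISO dates (assumed format)."""
    return {
        "client_id": row["clientId"],
        "birth_date": row["birthDate"],
        "source": "portal",
    }


def unify(records):
    """Merge by client_id; the first record wins, disagreements are flagged."""
    merged, conflicts = {}, []
    for rec in records:
        existing = merged.get(rec["client_id"])
        if existing is None:
            merged[rec["client_id"]] = dict(rec)
        elif existing["birth_date"] != rec["birth_date"]:
            conflicts.append(rec["client_id"])
    return merged, conflicts
```

Two sources and two fields already require agreed formats, a matching key, and a rule for conflicts. Multiply that by every system an agency runs and the coordination cost becomes clear.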

The Role of Workflows That Gets Overlooked

There’s a tendency to focus heavily on how intelligent the agent is, and not enough on how work actually gets done.

Agentforce can determine what needs to happen. But it still relies on existing workflows to execute those decisions. That means the underlying processes need to be modular, accessible, and designed for reuse. This is where OmniStudio, Flows, and Apex come together as the operational layer that the agent interacts with.

If that layer isn’t structured properly, the system reaches a point where it knows what to do but has no reliable way to do it. This is where traditional Salesforce architecture principles start to matter more, not less. The difference is that now their impact is immediate and visible.
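The gap between knowing and doing can be shown with a small action registry. This is a generic pattern, not the Agentforce, Flow, or Apex API: `ACTIONS`, `action`, `schedule_interview`, and `execute` are hypothetical names used only for this sketch.

```python
# Registry of callable workflows, keyed by the name the agent refers to.
ACTIONS = {}


def action(name):
    """Decorator that exposes a function to the agent under a stable name."""
    def register(func):
        ACTIONS[name] = func
        return func
    return register


@action("schedule_interview")
def schedule_interview(case_id, date):
    """A modular, reusable step with explicit inputs."""
    return f"Interview for {case_id} on {date}"


def execute(plan):
    """Run each planned step, reporting steps with no registered workflow."""
    results = []
    for step in plan:
        handler = ACTIONS.get(step["action"])
        if handler is None:
            # The agent reasoned its way here, but nothing can carry it out.
            results.append({"action": step["action"], "status": "unavailable"})
            continue
        output = handler(**step["args"])
        results.append({"action": step["action"], "status": "done", "output": output})
    return results
```

A plan that includes an unregistered step such as `issue_payment` comes back marked `unavailable`: the decision was made, but the operational layer had nothing to offer.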

What Success Actually Looks Like

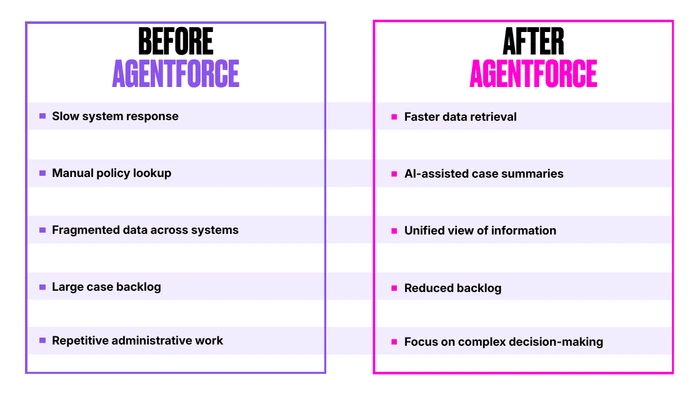

When the pieces come together, the change is noticeable. Administrative overhead starts to reduce. Response times improve. Caseworkers spend less time navigating systems and more time focusing on cases that require judgment. The goal isn’t to remove people from the process. It’s to remove the friction that prevents them from doing their work effectively.

The difference becomes clearer when you compare a typical day before and after introducing Agentforce:

That shift is already happening in parts of the public sector, where AI is handling routine interactions and allowing teams to focus on more complex, human-centered work. The results don’t come from the technology alone. They come from how that technology is integrated into the broader system.

Where Organizations Actually Stand Today

Interest in AI adoption is growing across public sector organizations, but readiness is uneven. Many teams are still building the foundations required to support these systems, whether that’s data infrastructure, governance frameworks, or internal expertise. Others are further along, experimenting with production use cases and scaling their deployments.

The gap between these groups isn’t access to technology. It’s how prepared they are to use it effectively.

Final Thoughts

Agentforce is a powerful platform. That part is clear. What’s less clear, and often overlooked, is that it doesn’t operate independently. It reflects the system it’s placed in. If the underlying environment is fragmented, the output reflects that fragmentation. If workflows are unclear, the system struggles to act. If governance is weak, risks increase. But when those foundations are in place, the results look different.

Maria still has the same experience. The same understanding of her work. What changes is how much time she spends navigating the system versus actually helping people. That’s the outcome that matters. And it has very little to do with the AI alone, and everything to do with how it’s implemented.