AI has made it easier than ever to build in Salesforce. Tasks that once took hours can now be done in minutes, whether that’s writing a formula, generating Apex, or spinning up proofs of concept. This change is a clear win for many teams. It’s faster, more accessible, and in some cases it lets people do things they simply couldn’t do before, whether for lack of ability or capacity.

But there’s also a trade-off that is surfacing just as quickly. In a previous article, I looked at how AI agents are already introducing real financial risk for Salesforce customers when things go wrong at scale. What I haven’t looked at yet is where that risk actually begins. In many cases, it starts much earlier – during the build process itself.

If AI is now helping write the logic that powers orgs, the big question that follows is: When something breaks, exposes data, or quietly introduces long-term issues, who is actually responsible for it?

AI Is Just a Tool, But a Very Dangerous One

One of the easiest ways to frame AI in Salesforce development is to call it what it is – a tool. That’s how it’s positioned, and it’s how most people justify using it. After all, the ecosystem has always been built on tools that help people move faster.

As my colleague Tim Combridge wrote in his recent SF Ben article, Why You Should Not Be Vibe Coding Salesforce Flows, the power and capability of AI – or in this case, vibe coding – is incredibly enticing, but equally dangerous.

I spoke to Tim for this piece and, as mentioned above, a lot of the current value does come from speeding up everyday tasks.

“Quite often we’ll get an error, and it can often be quite difficult to see what is broken,” Tim explained. “I’ve also basically forgotten how to write a formula myself because for the last three years, guess who’s been doing it? Not me. It’s been some form of LLM, I’m getting lazy!”

This is the natural upside of AI – faster troubleshooting, quicker builds, and a lot less time spent on repetitive work. The risks are what sit underneath that speed.

As Tim explained: “Vibe coding tools are great. In the wrong hands, as with anything, they can get quite dangerous. It’ll grab this data, it’ll display it… but it also does some other things that a developer… would know to check.”

That’s where problems start to arise more frequently. You can build something that might look right and work on the surface, but present issues you can’t see. Tim used a security example, where a component might display data correctly, but if it’s running in the wrong context, then it could expose far more than intended.

“If you built it wrong, somebody who knows what they’re doing can access your site [and] query whatever data that they want… because you haven’t checked behind the scenes.”
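Tim’s example can be sketched in a few lines. What follows is a toy model in plain Python, not Apex or any Salesforce API, and every name in it (`Record`, `fetch_system_context`, `fetch_user_context`, `render`) is hypothetical. It assumes a component that only ever shows a user their own rows, and compares a data fetch that ignores who is asking with one that filters by the viewer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    owner: str
    name: str
    salary: int  # stands in for any sensitive field


# Hypothetical data set shared by both fetch functions.
RECORDS = [
    Record("alice", "Alice's account", 50000),
    Record("bob", "Bob's account", 72000),
]


def fetch_system_context(viewer: str) -> list[Record]:
    """Ignores who is asking and returns every record."""
    return list(RECORDS)


def fetch_user_context(viewer: str) -> list[Record]:
    """Returns only the records the viewer owns."""
    return [r for r in RECORDS if r.owner == viewer]


def render(records: list[Record], viewer: str) -> list[str]:
    """What the component displays: the viewer's own record names."""
    return [r.name for r in records if r.owner == viewer]


if __name__ == "__main__":
    unsafe = fetch_system_context("alice")
    safe = fetch_user_context("alice")
    # The screen looks the same either way...
    print(render(unsafe, "alice") == render(safe, "alice"))
    # ...but the payloads behind it are different sizes.
    print(len(unsafe), len(safe))
```

Both versions put the same rows on screen for Alice, but the first also hands her session Bob’s record. Testing only what is displayed would not reveal the difference, which is why the behind-the-scenes check Tim describes matters.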

Little of this is obvious from the front end, because the risk is hidden in parts that haven’t been properly reviewed. This gets to the core issue at play – AI lowers the barrier to building, but not the barrier to understanding what’s actually being built.

There’s also a tendency to trust the output because it works, but as Tim pointed out to me: “Just because it looks like it’s doing what it’s supposed to… doesn’t mean that we’re good to go. We need to do thorough testing.

“It’s still a tool. It’s not the tool’s fault if something goes wrong.”

So at an individual level, not much has changed – if you’re using AI to build something in Salesforce, you own what it produces. What may have changed is how easy it is to build something that goes a bit beyond your level of experience. Once that happens, the responsibility becomes much harder to understand and manage.

The Responsibility Doesn’t Stop at the Developer

It’s easy to say that the responsibility sits with the person using the tool. If you generate the code, you own the outcome. Simple enough.

However, if it were that simple, there would be no need for this article! The reality is not that clean.

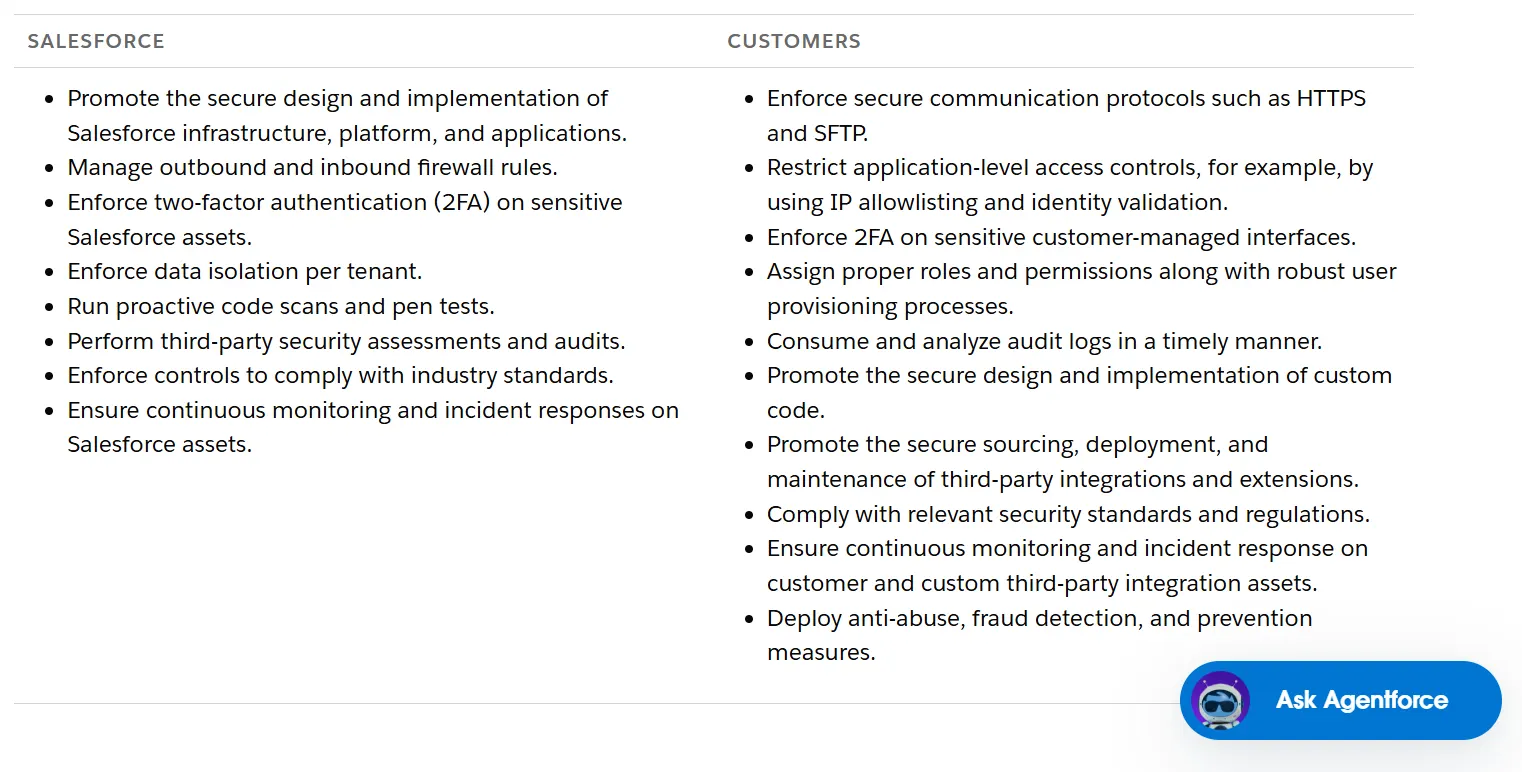

As Salesforce MVP Paul Battisson told SF Ben, responsibility still sits under a shared model, so no single person (let’s say, an entry-level admin) carries full ownership.

Now, this doesn’t remove all responsibility from the developer or admin. If you’re using AI to build something, you are still accountable for ensuring it’s correct and fit for purpose. But it does highlight that the way AI is being used inside organizations is rarely just an individual choice. In many cases, it’s being driven from the top down.

“There are organizations now where people are being incentivized from the top down to use AI,” Paul explained. “They’re almost measuring people’s use of AI as a productivity measure.”

If teams are being pushed to use AI more, build faster, and deliver quicker outputs, then the risk isn’t just tied to the person writing the code. It’s also tied to the environment they’re working in. Speed becomes the priority, and review can start to slip as a result.

As Paul put it aptly: “If you’re measuring… how much AI are you using, you will then get people using more AI. And it is thus your fault if people are producing more AI stuff that doesn’t work.”

There are also outstanding questions about skill boundaries. AI makes it easier for admins to generate code and for developers to move faster than before. But it doesn’t remove the need for experience.

Paul used an interesting analogy to describe the importance of experience in this scenario.

“It’s like preparing a blowfish. There’s a small part that’s safe to eat, but the rest is poisonous. If you’re not trained, you shouldn’t be touching it. It’s the same with AI. If you’re using it to generate something you don’t fully understand, you need to bring in someone who does to make sure it’s secure and built properly.”

In other words, just because a tool makes something possible doesn’t mean it’s safe to do without the right level of understanding. If you lack that understanding, escalate the work to someone who has it.

This is where teams start to matter more than individuals. The reality is that most Salesforce implementations already rely on collaboration between roles. Admins, developers, architects, and stakeholders all have their say in how a solution is built, and AI doesn’t remove that need. If anything, it increases it.

It also ties back to something that’s already becoming a problem across organizations, which is visibility.

In my previous article on AI agents, one of the biggest risks came down to systems acting at scale without proper oversight, and the same pattern applies here. If teams are building faster with AI, but governance and review processes aren’t keeping up, then risk starts to accumulate quietly in the background.

So while the developer or admin may own the immediate output, they’re not the only ones responsible for the outcome.

The Risks Nobody Is Fully Accounting For Yet

It seems as though most AI-related issues in Salesforce won’t come from something obviously broken, but from things that actually work – and that’s what makes them harder to identify.

Take security, which we mentioned earlier. A component might behave as expected but introduce risk behind the scenes. As Tim explained: “Security is my big concern. If you are not a developer and you do not understand what the code does, you wouldn’t think to check it.”

So while something may look correct to the naked eye, the biggest risks sit in whatever hasn’t been reviewed thoroughly by the right people.

The same applies to technical debt. As most are aware, AI isn’t the root cause, but it does increase the volume of what’s being built.

“Is AI making technical debt worse? No,” Paul said. “Is the person using AI making the technical debt worse? Possibly!”

All this output means more chances for duplication, inconsistency, and poor decisions. Whether that turns into long-term problems will likely depend on how much of it is actually understood.

If teams start relying too heavily on AI to generate code or solutions, fewer people will have a full understanding of how those systems really work. And in Salesforce – where complexity already builds quickly – that’s a significant problem.

“AI is not a replacement for your brain. If you want to understand what’s been done, you need to do it yourself,” Paul said. “You still need documentation written by humans, understood by humans.”

If you don’t have that shared understanding, knowledge stays isolated, and risk increases.

Final Thoughts

So who actually owns the risk? In reality, it’s easier to answer who doesn’t. The tool doesn’t. The AI doesn’t. And pointing fingers at a single person rarely tells the full story here.

Much like the hacking cases we’ve seen across the Salesforce ecosystem, this isn’t about blame, especially when newer admins or less experienced team members may be involved. What matters more is education and raising awareness.

Arguably, there still needs to be a clear understanding of how these tools should be used at all levels – junior, mid-level, and senior. Not just because of the risks to the business, but because of the impact on the people using them. There are already growing concerns about over-reliance on AI and what that does to problem-solving and critical thinking.

AI can be incredibly useful, but it’s still just a tool. Treating it that way, rather than as something that replaces judgment, is what will prevent most of these issues before they happen.